Databao

Agentic platform with modular AI tools and a governed semantic layer for any data stack

Three ways to connect an AI agent to your business data

There are several ways to connect an AI agent to your data. Each approach solves a different problem and comes with its own trade-offs, but what you’re really choosing between is speed and reliability. In this post, we’ll explore how to balance the two to get the best results for your team and use case.

Today, many teams are looking to use AI agents to interact with their data through natural, conversational interfaces. Instead of writing queries or building dashboards, users can ask questions like “How did my week-2 conversion change compared to last week?” and get immediate, contextual answers.

Giving direct access: The faster but more fragile approach

The simplest setup is to connect an agent directly to your database. You give it access to your schema, provide it with some documentation, and let it write SQL on the fly. You can ask it a question in plain English, and it will generate a query or use an MCP server to give you an answer.

With this setup, you can be up and running in a few hours.

But the answers you receive are only as good as the agent’s interpretation of the data. It won’t really know your metrics and their specific definitions, so it will have to make an educated guess. Sometimes it will guess well, but often it won’t, and the numbers may look reasonable either way. Providing additional context via text files or tools like a Confluence MCP server can help. But without a single source of truth and predefined guardrails, there’s no guarantee that the agent will generate the right queries.
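To make the guessing problem concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `orders` table, its columns, and the two candidate queries are hypothetical and only stand in for what an agent might plausibly write when asked "What was our revenue?" without a shared definition.

```python
import sqlite3

# Hypothetical toy schema; table and column names are illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, status TEXT);
    INSERT INTO orders (amount, status) VALUES
        (100.0, 'paid'), (50.0, 'paid'), (80.0, 'refunded'), (20.0, 'pending');
""")

# Two queries an agent could reasonably generate for the same question.
# Both are valid SQL; they simply assume different meanings of "revenue".
CANDIDATE_QUERIES = {
    "all_orders": "SELECT SUM(amount) FROM orders",
    "paid_only": "SELECT SUM(amount) FROM orders WHERE status = 'paid'",
}


def run_candidates(connection):
    """Run each candidate query and return its single numeric result."""
    return {
        name: connection.execute(sql).fetchone()[0]
        for name, sql in CANDIDATE_QUERIES.items()
    }


results = run_candidates(conn)
for name, value in results.items():
    print(f"{name}: {value}")
# all_orders: 250.0
# paid_only: 150.0
```

Neither query fails, and neither number looks obviously wrong. Which one is correct depends entirely on a definition the agent was never given.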

This is why these setups are typically limited to data teams, since someone will always need to double-check the results.

Building a formal semantic layer: The reliable but slow-to-build approach

A more structured approach is to define a semantic layer. You model your metrics in tools like dbt or Cube. The agent no longer queries raw tables; it now works with predefined metrics. When the layer’s query engine produces the SQL, generation becomes more reliable because it follows predefined logic and metric definitions instead of making assumptions.

This alone makes a big difference: once the logic is encoded, answers become consistent, and the agent is no longer guessing what “revenue”, “churn”, or “subscriber growth” mean.
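The idea can be sketched in a few lines of Python. This is a toy illustration, not how dbt or Cube work internally: the `Metric` class, the `METRICS` registry, and `compile_metric` are all hypothetical names. The point is that the agent only picks a metric by name, and the SQL comes from a definition someone has already agreed on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A hypothetical metric definition: one aggregate over one table."""
    name: str
    table: str
    expression: str       # SQL aggregate expression
    filter: str = ""      # optional WHERE condition


# Definitions written and reviewed by the data team (illustrative values).
METRICS = {
    "revenue": Metric("revenue", "orders", "SUM(amount)", "status = 'paid'"),
    "order_count": Metric("order_count", "orders", "COUNT(*)"),
}


def compile_metric(metric_name: str) -> str:
    """Turn a metric name into SQL using only the predefined definition."""
    try:
        metric = METRICS[metric_name]
    except KeyError:
        # Unknown metrics are rejected instead of being guessed.
        raise ValueError(f"Unknown metric: {metric_name!r}") from None
    sql = f"SELECT {metric.expression} AS {metric.name} FROM {metric.table}"
    if metric.filter:
        sql += f" WHERE {metric.filter}"
    return sql


print(compile_metric("revenue"))
# SELECT SUM(amount) AS revenue FROM orders WHERE status = 'paid'
```

Every request for "revenue" now compiles to the same query, and a request for a metric that has not been defined fails loudly rather than producing a plausible-looking number.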

But in this case, the trade-off is time.

Building a proper semantic layer can take months. Every metric must be defined, reviewed by your data team, and maintained. As your business grows and your data evolves, new logic and metrics will inevitably be needed.

The answers are more reliable because queries are based on predefined metrics, but the cost is ongoing. Over time, data teams spend less time answering questions directly and more time keeping these definitions up to date.

The agent can’t do this work on its own, as it relies on humans updating the semantic layer to ensure it can answer questions consistently.

Building an automated semantic layer: Avoiding trade-offs

A third approach is to build an automated semantic layer that can learn and maintain itself as usage grows.

Instead of requiring you to define every metric upfront, the system builds the initial layer from your existing dbt projects and data sources. When a question calls for a metric that isn’t defined yet, the agent creates it on the fly in the semantic layer and opens a PR in Git that the data team can then review and approve.

This way, the layer is generated from your existing data, helping you avoid the usual cold-start problem and the need to define all your metrics before anyone can use the system.

As questions come in, the agent proposes new metrics, which are in turn reviewed by your data team. This keeps the system aligned with real usage, while ensuring that definitions stay consistent and trusted. Business users can interact with the data earlier, and the metrics evolve based on actual needs.
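The propose-then-review loop can be sketched as a small in-memory store. This is an assumption-heavy illustration rather than Databao's implementation: `MetricProposal`, `ReviewedMetricStore`, and the example metric are hypothetical, and the `approve` call stands in for a data team merging a PR in Git.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MetricProposal:
    """A metric suggested by the agent, awaiting human review."""
    name: str
    sql: str
    status: str = "pending"   # becomes "approved" after review


class ReviewedMetricStore:
    """Hypothetical store where only approved metrics are served as trusted."""

    def __init__(self) -> None:
        self._proposals = {}

    def propose(self, name: str, sql: str) -> MetricProposal:
        """Record an agent-suggested metric; it stays untrusted until approved."""
        if name in self._proposals:
            raise ValueError(f"Metric {name!r} already exists")
        proposal = MetricProposal(name, sql)
        self._proposals[name] = proposal
        return proposal

    def approve(self, name: str) -> None:
        """Stand-in for a reviewer approving the corresponding change."""
        self._proposals[name].status = "approved"

    def trusted_sql(self, name: str) -> Optional[str]:
        """Return SQL only for metrics that have passed review."""
        proposal = self._proposals.get(name)
        if proposal is None or proposal.status != "approved":
            return None
        return proposal.sql


store = ReviewedMetricStore()
store.propose("paid_revenue", "SELECT SUM(amount) FROM orders WHERE status = 'paid'")
print(store.trusted_sql("paid_revenue"))   # None: still pending review
store.approve("paid_revenue")
print(store.trusted_sql("paid_revenue"))   # the approved SQL
```

The design choice worth noting is the gate in `trusted_sql`: new definitions can appear as soon as a question needs them, but nothing is treated as a consistent, reusable metric until a person has signed off on it.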

Databao is built around this principle, bringing together automated semantic layer generation and human-in-the-loop validation so teams can scale usage without sacrificing consistency or trust.

What actually matters

At a glance, all three approaches address a similar need: enabling business users to ask questions about their data in natural language.

But the hard part is not generating answers; it’s making sure they can be trusted. Silent errors, where the numbers look right but are based on the wrong definition, are the hardest to catch and often the most damaging. That’s why the structure behind the agent matters more than the interface itself.

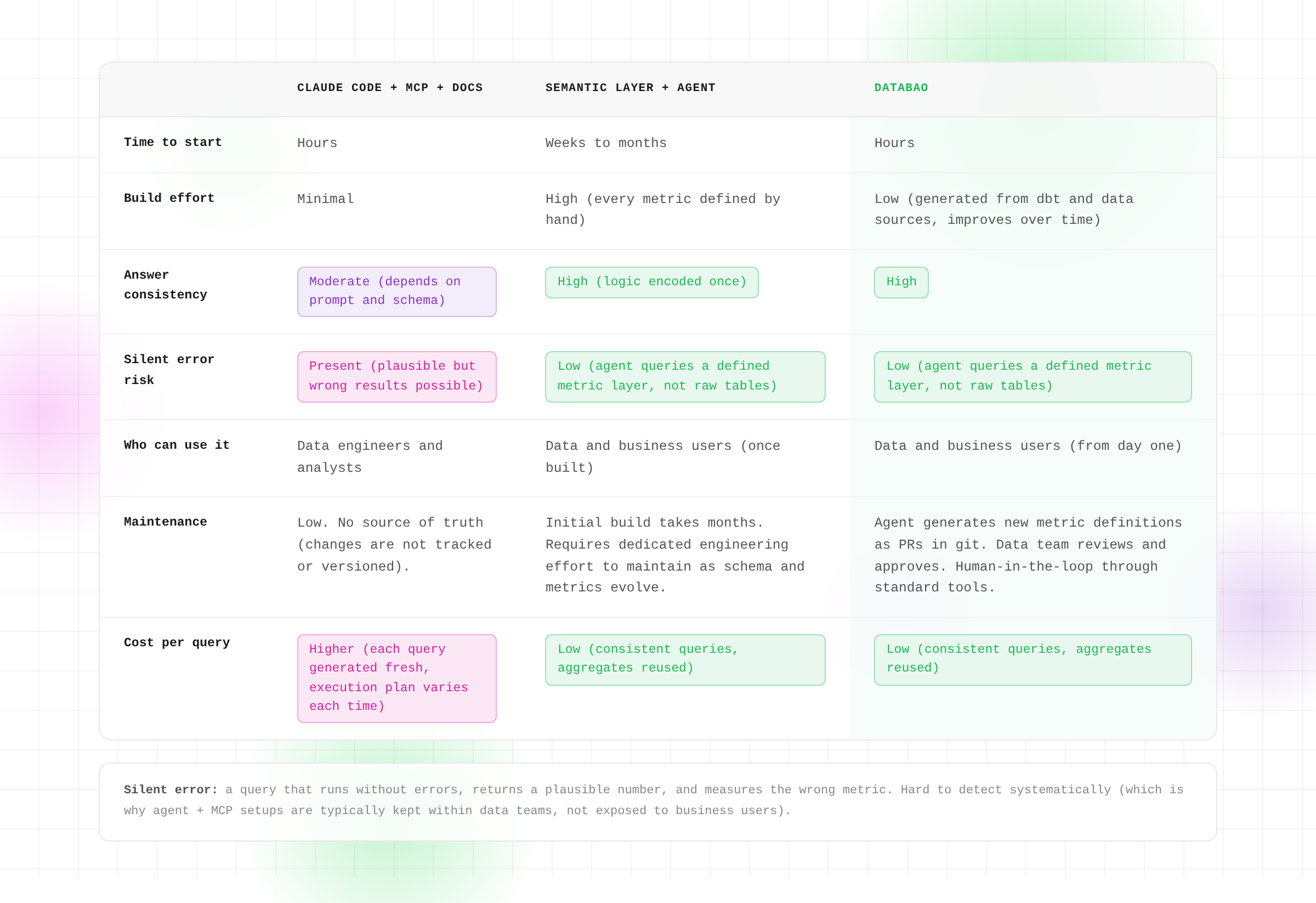

If we sum it all up:

- Direct access is fast, but it requires constant verification.

- A formal semantic layer is reliable but slow to build.

- An automated layer tries to strike a balance between the two, with built-in review processes that ensure the agent has context without placing the entire burden of providing it on humans.

There isn’t a perfect option, and the right choice depends on where you are in your data journey.

If you’re exploring, speed might matter more. But if you’re scaling usage across a company, consistency becomes critical.

About Databao

If you’d like to try enabling self-service analytics through an automated semantic layer, you can integrate Databao into your workflow and join us in building a proof of concept together. We’ll work with you to understand your use case, define a context-building process, and give a select group of business users access to the agent. Together, we’ll evaluate the quality of the responses and your overall satisfaction with the results.