The Ethics of AI Code Review

As AI technology continues to mature, its application grows wider too. Code review tools are one of the fastest growing use cases for AI in software development. They facilitate faster checks, better consistency, and the ability to catch critical security issues humans might miss.

The 2025 Stack Overflow Developer Survey reveals that 84% of developers are now using or planning to use AI tools in their development process, including as part of code reviews. This is up from 76% in 2024. But as these tools grow more sophisticated, the question of accountability becomes more important.

When an AI code review tool suggests a change and a developer accepts it, who’s responsible if that change introduces a bug? It’s not just a theoretical question. Development teams face this issue every time they integrate an AI code review process into their workflow.

The conundrum isn’t just about whether the quality of AI code review is good enough. It’s about understanding the ethical questions that need to be considered when AI tools make recommendations that humans implement.

So, just how ethical is code review carried out by AI, and what steps should developers take to ensure that, where it’s utilized, this form of review is integrated ethically? Let’s take a closer look.

The rise of automated code review

Code review automation has come a long way over the past decade. Machine review, through methods such as static code analysis, now works alongside traditional peer review. More recently, AI-powered systems that learn from millions of code examples have joined the party, streamlining processes and adding further automation.

Code review automation falls into two distinct approaches. Rule-based static code analysis checks your code against predefined standards, while AI-powered systems learn patterns from large code repositories.

It’s the ethical questions raised by the latter that make for interesting conversations.

Understanding the differences between these approaches helps your team make informed decisions about which to choose. Here’s a brief breakdown of the key differences between the two analysis methods:

| | Rule-Based Static Analysis | AI-Powered Analysis |
|---|---|---|
| How it works | Checks code against predefined rules and standards | Learns patterns from large code repositories |
| Transparency | Shows the exact rule violated | Makes recommendations based on learned patterns |
| Consistency | Provides the same results every time for the same code | Can vary based on model training and updates |
| Context understanding | Limited to codified rules | Can recognize complex patterns across codebases |
| Training required | None – rules are predetermined | Requires large datasets of code examples |
| Best for | Enforcing team standards, catching known issues | Identifying subtle patterns, style suggestions |
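To make the rule-based column concrete, here is a minimal sketch of a rule-based check written with Python's standard `ast` module. The rule IDs (`R001`, `R002`), the `Finding` type, and the `check_source` function are hypothetical names chosen for illustration and do not come from any real tool. The point is only that each finding cites the rule it violated, and that the same input always yields the same findings.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str  # hypothetical rule identifier, not taken from any real tool
    line: int
    message: str


def check_source(source: str) -> list[Finding]:
    """Run two hard-coded rules over Python source and cite the rule for each hit."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Rule R001: a bare `except:` catches every exception type.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(Finding("R001", node.lineno, "bare except clause"))
        # Rule R002: any direct call to the built-in name eval().
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id == "eval"
        ):
            findings.append(Finding("R002", node.lineno, "call to eval()"))
    # Sort so the report order never depends on traversal order.
    return sorted(findings, key=lambda f: (f.line, f.rule_id))


SAMPLE = """\
try:
    value = eval(user_input)
except:
    value = None
"""

if __name__ == "__main__":
    for finding in check_source(SAMPLE):
        print(f"line {finding.line}: [{finding.rule_id}] {finding.message}")
```

An AI-powered reviewer has no equivalent of the `rule_id` field by default, which is part of why the transparency row in the table differs between the two approaches.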

Of course, this technology is advancing quickly and various tools are incorporating new functionality.

What are the benefits of AI code review?

AI-powered code review represents a genuine advancement in development workflows. What were experimental tools just a few years ago are now production-ready systems that many development teams rely on daily. The benefits are undeniable for organizations of all sizes.

Higher volumes, same results

AI code review allows you to process thousands of lines of code in seconds without the fatigue or variable attention that can affect human reviewers. AI tools maintain the same level of scrutiny on the 500th pull request as they did on the first, eliminating inconsistency and often helping to overcome issues such as deadline pressure that can lead to missed problems.

Keep everything secure

AI tools can identify vulnerability patterns across different languages and frameworks, often catching issues such as insecure deserialization, XML external entity (XXE) attacks, and improper authentication handling before they reach production. That said, AI-generated suggestions can introduce security issues of their own, so they still need scrutiny.

Reducing bias

With AI code review, teams can apply identical standards to every code submission, no matter who wrote it, when it was submitted, or how much political capital the author has in the organization. This removes the subtle (and not-so-subtle) biases that can creep into human code review, such as senior developers’ code receiving lighter scrutiny.

Faster feedback

Rather than having to wait days for review feedback, AI code review means developers can get input while the context is still fresh – often within minutes.

This tight feedback loop means issues get fixed while the developer still has the mental model loaded, reducing the cognitive cost of having to switch back to yesterday’s or last week’s code after moving on to something new.

What are the challenges and limitations of AI code review?

AI code review tools are powerful, but they’re not magic, and treating them as infallible creates its own problems. Understanding where these tools have limitations helps your team use them effectively rather than either over-trusting their recommendations or dismissing them entirely.

Context blindness

Tools can miss project-specific intent, architectural decisions, or business requirements not reflected in the code itself. A technically correct suggestion might break an undocumented but critical assumption.

Automation bias

With any tool, there’s a risk that developers will over-trust it. Automated code review is no different: the danger is that team members accept AI suggestions without properly evaluating them. When a tool has been right 95% of the time, it’s easy to skip careful review on that problematic 5%.

Dataset limitations

Models trained on narrow datasets can reinforce certain coding styles while missing framework-specific best practices. An AI tool trained mostly on open-source JavaScript, for example, might be less reliable when reviewing enterprise Java or Go microservices.

AI automation ethics: Who is responsible and accountable?

The big question with AI code review tools is who is responsible for the output.

As an example, let’s say an AI code review tool flags a function as inefficient and suggests optimizing it. When a developer reviews this, they may think it looks reasonable and simply accept the change.

The code then ships to production. Under high load, however, the “optimization” causes a race condition that briefly exposes customer data. The team then has to spend time investigating and fixing the problem, which cuts into its output.

Who’s accountable in cases like this? Is the developer responsible for accepting the recommendation without fully understanding it? Is the code reviewer accountable for not catching what the AI missed? Does responsibility fall on the organization for deploying these tools without proper governance? Should the vendor share liability for providing recommendations without sufficient context? Or is it the responsibility of everyone involved?

These questions mirror larger debates about AI accountability across all sectors. Kate Crawford’s research examines how AI systems often serve and intensify existing power structures, with design choices made by a small group affecting many. Her book Atlas of AI shows these systems aren’t neutral tools, but reflections of specific values and priorities.

Timnit Gebru’s work on algorithmic bias shows how limitations in training data can create measurable harm. The groundbreaking Gender Shades study, which she co-authored with Joy Buolamwini, showed that commercial facial analysis systems were significantly less accurate for certain groups, a disparity linked to the over-representation of others in the underlying data. The same principle applies to code review – if AI models are trained on narrow slices of the programming world, they’ll be less effective when applied to different and wider contexts.

The Center for Human-Compatible AI, led by Stuart Russell, emphasizes that AI systems should maintain uncertainty about objectives rather than rigidly chasing goals. This applies directly to AI code review. Tools that are absolutely “certain” about their recommendations, without acknowledging where the training or reasoning might be limited, are more dangerous than those expressing appropriate uncertainty.

Transparency and bias in automated review systems

As AI code review tools become more widely adopted, vendors face growing ethical obligations to disclose model limitations and explain decision rationale.

Code review models as “black boxes”

Many AI code review systems offer limited visibility into how they prioritize issues or generate suggestions. Unlike rule-based static analysis tools that cite the specific standards they’re checking against, AI models often provide recommendations based on learned patterns without clear explanation. A developer who sees “this function could be refactored” won’t necessarily know whether that’s based on performance patterns, readability heuristics, or something else entirely.

This opacity makes it difficult to decide whether a suggestion is genuinely valuable or shows a misunderstanding of context. A system whose internal workings users can’t see or understand is known as a “black box”. Without transparency in AI code review systems, development teams are essentially asked to trust this black box, which is nearly impossible without more information.

Inherited bias from training data

AI models trained on large code repositories can inherit biases from their training data, reinforcing certain programming conventions while missing framework-specific best practices.

If an AI code review tool is trained primarily on Python data science code, for example, it might suggest patterns optimized for notebook environments when reviewing production backend services, or recommend approaches that work for single-threaded scripts but cause problems in concurrent systems. This creates a hidden quality gap that teams may not recognize until after adoption.

Managing responsibility for AI code review

Ethical AI code review requires action from both the development teams that use these tools and the vendors that build them. Teams need governance structures that ensure human oversight remains meaningful, and vendors need to commit to transparency to help teams make informed decisions.

Team responsibilities and governance

Teams adopting AI code review tools need to build governance around them from day one. Waiting until something goes wrong to establish accountability is too late. The most effective teams treat AI recommendations as input that informs human decision-making. Core practices include:

Establishing ownership: Every AI recommendation needs a human reviewer accountable for the decision to merge. No code should ship based solely on automated approval.

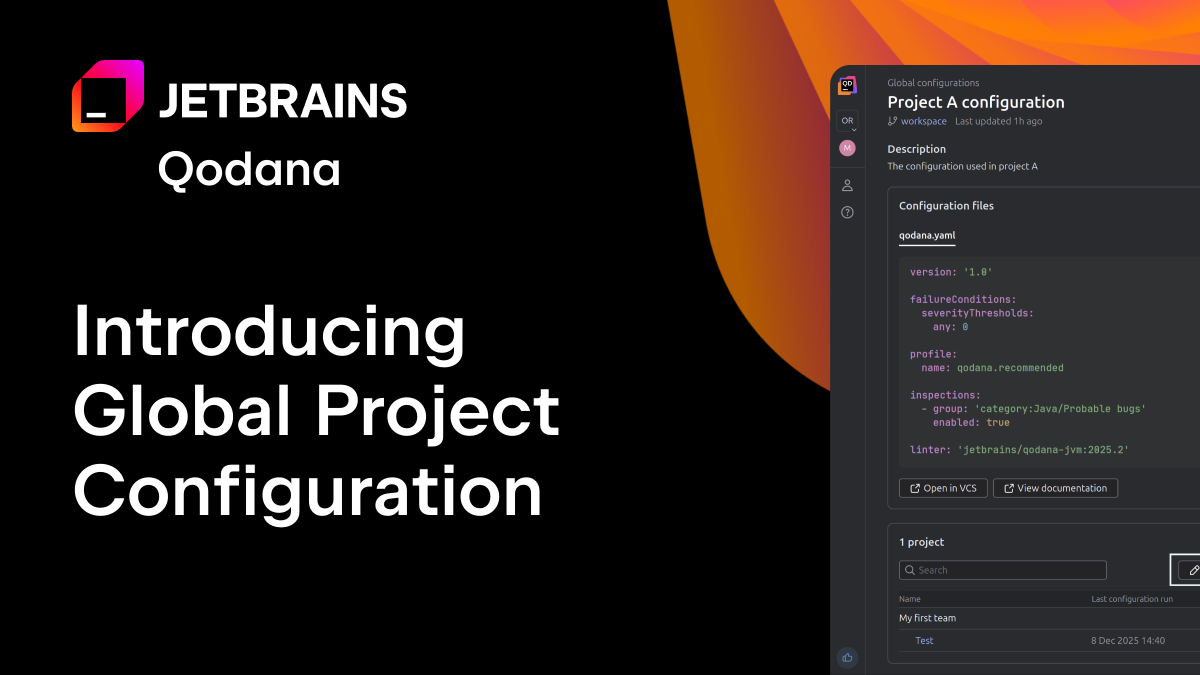

Documenting decision trails: Maintain audit logs distinguishing AI suggestions from human approvals. When problems emerge, you need to understand what the AI recommended and why a human reviewer chose to accept it.

Setting clear policies: Clearly define when to use AI recommendations. Should they be used for routine style checks or are they trusted with critical security reviews? Establish guidelines for testing suggestions locally and handling conflicts between AI and team knowledge.

Encouraging critical evaluation: Train developers to question AI outputs rather than blindly accepting them. Create a culture where challenging tool recommendations is seen as good engineering practice, not as something that slows delivery.

Promoting ongoing dialogue: Use retrospectives to discuss tool limitations and effectiveness. What patterns has the AI missed? Where has it been particularly helpful? This calibrates trust and identifies gaps that others can look out for.
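As a rough illustration of the ownership and decision-trail practices above, the sketch below records each AI suggestion together with the human decision on it, and refuses to merge when an accepted suggestion has no named reviewer or rationale. Everything here is an assumption made for the example: the `ReviewDecision` fields, the `can_merge` policy, and the JSON-lines log format are hypothetical, not the behavior of any particular tool.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    """Timestamp for the audit trail, in ISO 8601 UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class ReviewDecision:
    # All field names are hypothetical, chosen for this sketch.
    change_id: str                        # pull request or commit identifier
    ai_tool: str                          # which tool produced the suggestion
    ai_suggestion: str                    # what the tool recommended
    accepted: bool                        # whether the suggestion was applied
    human_reviewer: Optional[str] = None  # who made the call; None if nobody has
    rationale: str = ""                   # why the reviewer accepted or rejected it
    recorded_at: str = field(default_factory=_utc_now)


def to_audit_line(decision: ReviewDecision) -> str:
    """Serialize one decision as a JSON line, keeping AI and human fields separate."""
    return json.dumps(asdict(decision), sort_keys=True)


def can_merge(decisions: list[ReviewDecision]) -> bool:
    """Allow a merge only if every accepted suggestion has a named owner and a reason."""
    return all(
        bool(d.human_reviewer) and bool(d.rationale.strip())
        for d in decisions
        if d.accepted
    )


if __name__ == "__main__":
    owned = ReviewDecision(
        change_id="change-1",
        ai_tool="example-ai-reviewer",
        ai_suggestion="Replace the lock with a cached lookup",
        accepted=True,
        human_reviewer="alice",
        rationale="Tested locally under load; no shared state is touched",
    )
    unowned = ReviewDecision(
        change_id="change-1",
        ai_tool="example-ai-reviewer",
        ai_suggestion="Inline the retry helper",
        accepted=True,
    )
    print(to_audit_line(owned))
    print("merge allowed:", can_merge([owned, unowned]))
```

Keeping the rationale as a required field for accepted suggestions is one way to make the audit trail answer "why did a human accept this?" rather than only "what did the AI say?".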

Vendor obligations for ethical AI

Tool vendors building AI code review systems carry ethical obligations. They need to be transparent about how models make decisions, honest about limitations, and supportive of meaningful human oversight. Specifically, vendors should:

Provide explainable recommendations. Clarify why a change was suggested, not just what to change. Instead of “consider refactoring this function,” explain “this function has high cyclomatic complexity (17), which typically correlates with more defects” to give users more context on which to base their decision to reject or accept.

Offer contextual confidence scores. Help developers understand which recommendations need more scrutiny. Context like “high confidence based on 10,000+ similar contexts” versus “low confidence – limited training data for this framework” can make all the difference to users.

Enable customizable alignment. Let teams adapt tools to their priorities. Security-focused teams might prioritize vulnerability detection over style, whereas performance-critical applications can put efficiency above readability.

Adopt open standards. Support regulatory frameworks like the EU AI Act. Commit to third-party auditing of models and transparency about training data sources and limitations.
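The first two obligations above can be sketched in a few lines. The example below computes a rough cyclomatic complexity score with Python's standard `ast` module, attaches the reason to the recommendation, and uses a confidence value to decide how much scrutiny the suggestion deserves. This is an approximation under stated assumptions: the counting rule is simplified, the `Recommendation` type and `triage` thresholds are hypothetical, and the `confidence` value is a stand-in for whatever score a real model might report.

```python
import ast
from dataclasses import dataclass
from typing import Optional

# Constructs counted as one extra decision point each (a simplification).
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def approximate_complexity(source: str) -> int:
    """Rough cyclomatic complexity: 1 plus the number of branching constructs."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra decision points.
            complexity += len(node.values) - 1
    return complexity


@dataclass(frozen=True)
class Recommendation:
    summary: str       # what to change
    reason: str        # why the change is suggested
    confidence: float  # 0.0 to 1.0; hypothetical score supplied by the tool


def explain_complexity(
    source: str, threshold: int = 10, confidence: float = 0.9
) -> Optional[Recommendation]:
    """Return a recommendation that states its reason, or None below the threshold."""
    score = approximate_complexity(source)
    if score <= threshold:
        return None
    return Recommendation(
        summary="Consider refactoring this function",
        reason=f"approximate cyclomatic complexity is {score} (threshold {threshold})",
        confidence=confidence,
    )


def triage(rec: Recommendation, review_below: float = 0.7) -> str:
    """Route low-confidence recommendations to closer human review."""
    return "needs close human review" if rec.confidence < review_below else "routine review"


SAMPLE = """\
def classify(n):
    if n < 0 and n % 2:
        return "negative odd"
    for i in range(n):
        if i == 3:
            return "has three"
    return "other"
"""

if __name__ == "__main__":
    rec = explain_complexity(SAMPLE, threshold=3, confidence=0.55)
    if rec is not None:
        print(f"{rec.summary}: {rec.reason} -> {triage(rec)}")
```

The design choice worth noting is that the reason and the confidence travel with the recommendation itself, so a reviewer never sees "consider refactoring" without the evidence behind it.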

Building accountability into automated workflows

Automation (or a hybrid approach) doesn’t absolve humans of responsibility. It just shifts how that responsibility is managed. As AI code review tools become more capable, the need for clear accountability frameworks becomes more urgent, and code provenance (tracking where each change came from) is likely to gain traction.

Teams must establish ownership structures, document decisions, and maintain healthy skepticism toward automated recommendations. At the same time, vendors will also need to prioritize transparency, disclose limitations honestly, and support meaningful oversight.

Different approaches to code review offer different trade-offs. Rule-based static analysis tools like Qodana give you transparent, deterministic inspections where every finding cites a specific rule. AI-powered tools offer pattern recognition across vast repositories. Many teams use both approaches, taking advantage of the strengths of each. And no doubt we will incorporate some AI technologies going forward, especially as Qodana becomes part of a new JetBrains agentic platform and we develop our code provenance features.

But today, the question isn’t whether to use automation in code review. It’s about how we build systems of accountability that ensure automated tools enhance rather than undermine code quality. Ethical automation isn’t just about compliance. It’s about building trust in the systems that shape our code and, ultimately, the software that shapes our world.