Stop Sending IDE-Catchable AI Code Errors to Review

AI coding tools might have handed your developers a productivity gain, but they’ve created a problem for your code review process. Pull request volume is up significantly, and the code arriving for review carries error patterns that weren’t common before generative AI. Yet it’s the same people with the same working hours who are in charge of reviewing it all.

Most engineering leaders are still working out what to do about it. According to our State of Developer Ecosystem 2025 survey of more than 24,000 developers, the dominant pattern is ad hoc: Developers simply use AI tools as they see fit with little governance from above.

Studies report that around 20%–25% of AI code hallucinations are detectable through automated structural and static analysis. Those checks can take place in the environment where the code was written, before a pull request is raised. No governance framework required, no new process layer.

The case is straightforward: your reviewers’ judgment is a finite resource. Every structural error that reaches review consumes some of it. Every structural error caught earlier doesn’t.

Code review is a decision process – AI just added more decisions

DX’s Q4 2025 data on 51,000 developers showed that daily AI users merge 60% more pull requests per week than light users. A 2025 randomized controlled trial across three enterprise companies found that developers who had access to an AI coding assistant completed 26% more tasks per week than those in the control group without access.

More code arriving at review means more decisions per reviewer per day. That pressure has a measurable cost. Decades before AI coding tools entered the picture, researchers found that review rate was a statistically significant factor in defect removal effectiveness, even after controlling for developer ability. More time spent per line of code reviewed was consistently associated with a greater number of defects found.

Skill alone couldn’t compensate for rushing. Better tooling should – but tools, including modern AI-assisted ones, have yet to close the gap between what a reviewer sees and what a reviewer needs to know:

- A 2024 study of a company’s AI code review tool found that even with 73.8% of automated review comments acted on, pull request closure time still increased 42%. The commentary was useful, but the burden was not reduced.

- In 2025, an empirical study of 16 AI code review tools across more than 22,000 comments discovered that their effectiveness varied widely.

- A January 2026 study revealed that effective review requires much more than a snapshot of what code was added or removed. Reviewers move between issue trackers, documentation, team discussions, and CI reports to understand what a change means in the codebase they are reviewing.

Review tools continue to leave it to developers to form the big picture. AI has added to that gap, not closed it.

AI is sending a different kind of code to review

A 2025 analysis of more than 500,000 code samples found that AI-generated code carries a distinct error profile: unused constructs, hardcoded values, and higher-risk security vulnerabilities all appear more often than in human-written code. A separate 2025 study identified defect categories with no real equivalent in human-written code.

The error profile is challenging enough, and the way reviewers engage with AI-generated code compounds it. A 2026 study found a blind spot among reviewers: AI-generated pull requests containing nearly twice the code redundancy drew fewer negative reactions from reviewers than human-written ones. Surface-level plausibility appeared to reduce critical engagement.

More volume. New error types. Less scrutiny. What does the delivery data show?

According to DORA, delivery stability drops 7.2% for every 25% increase in AI adoption. DORA attributed this pattern partly to larger changesets: More code generated means bigger batches at review, and bigger batches have consistently predicted instability. Size is the signal. The defect profile and the scrutiny data suggest what is behind it.

Have machines catch what machines can

Automated structural and static checks don’t involve human judgment calls. But who is putting those checks in place? Even at organizations with mature engineering practices, adequate structural screening did not emerge on its own at the individual level:

- Google, running LLM-powered code migrations across its codebase, found that reviewers needed to revert AI-generated changes often enough that the organization made a deliberate investment in automated verification to reduce that burden.

- Uber, processing tens of thousands of code changes weekly, found that AI-assisted development was overloading reviewers and built an automated review system that runs before human reviewers engage.

In both cases, the fix required an organizational decision. Google and Uber chose to do this at the pipeline level – upstream of pull requests.

The right development environment can catch the same category of errors earlier and requires no separate infrastructure.
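To make "structural" concrete, here is a minimal Python sketch of one such check: flagging imports in a generated snippet that do not resolve to any installed module. It is an illustration under stated assumptions, not how any particular IDE or pipeline implements its analysis. The function name `unresolved_imports` and the module name `totally_made_up_helper_lib` are hypothetical.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that cannot be found.

    Hypothetical helper for illustration only. It parses the code without
    running it and asks the import system whether each module exists.
    """
    missing: list[str] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x import y"; relative imports are skipped here.
            names = [node.module]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            if importlib.util.find_spec(top_level) is None and top_level not in missing:
                missing.append(top_level)
    return missing


# A generated snippet that references a library that does not exist.
generated = """
import json
import totally_made_up_helper_lib
from os import path
"""
print(unresolved_imports(generated))
```

A check like this needs no judgment call: the module either resolves or it does not. Whole-project analysis applies the same idea to every symbol, signature, and type in the codebase rather than only to imports.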

Put “no-excuses” structural analysis before the pipeline

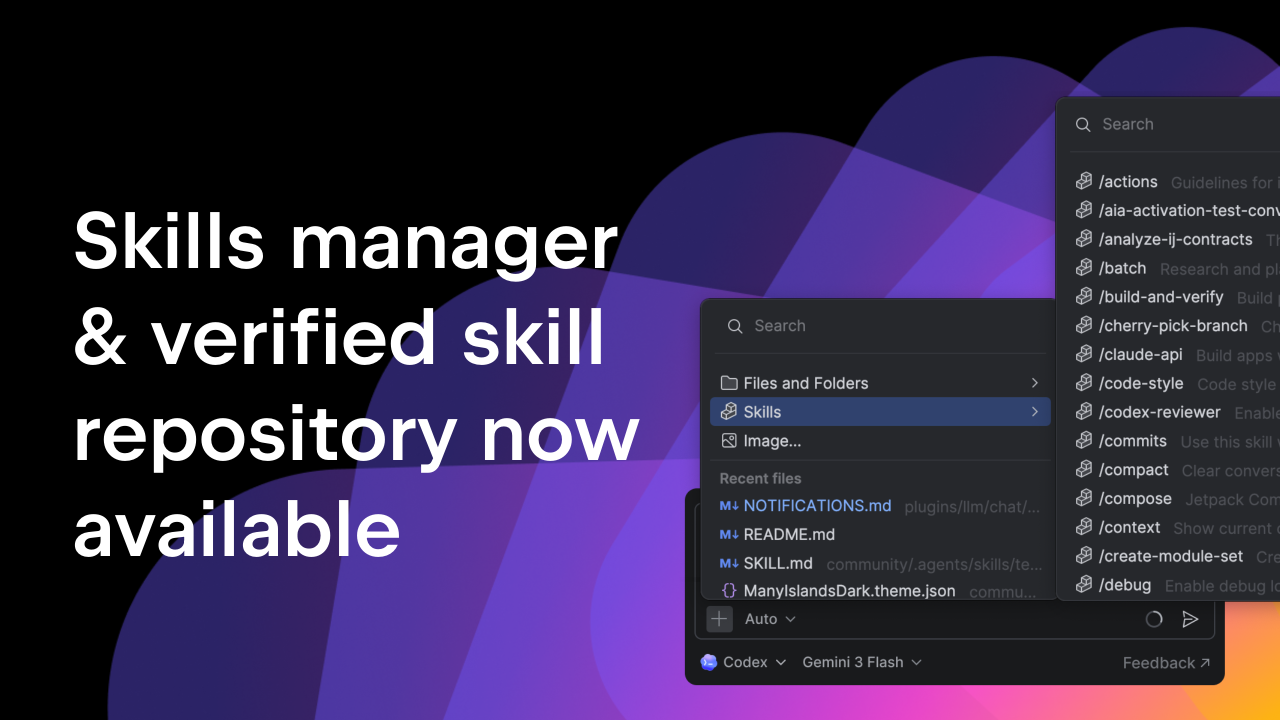

According to the 2025 Stack Overflow Developer Survey, developers use an average of 3.6 development environments. Which ones to use is typically their call. They know their languages and workflows.

As an engineering leader, you should know whether at least one of those environments is running deep, no-excuses checks of AI-generated code against what actually exists across the entire codebase in all languages. Many development environments do not; they rely on language-by-language approximations instead.

The distinction matters more at the organizational level than at the individual level. A developer working in a single language with a well-configured approximation-based setup may not feel the gap. But the quality of structural analysis across a team is only as consistent as the weakest setup in it.

The same studies on AI code hallucinations found that roughly 44% involve errors that no automated check reliably surfaces. That is more than enough for your reviewers to contend with. Reserve their capacity for the errors only they can catch.

For every major language your team uses, there is a JetBrains IDE that maintains a whole-project-resolved model of your codebase. Any code that lands in the editor – regardless of which AI tool produced it – is checked against that model. For teams that want enforcement both before and in the pipeline, Qodana extends that same inspection depth into CI/CD.

Your reviewers’ judgment is the resource. Structural screening is how you protect it.

See how JetBrains for Business supports that pairing of structural screening and reviewer judgment at scale.