Scala Plugin for IntelliJ IDEA and Android Studio

Big Data Tools EAP 4: AWS S3 File Explorer, Bugfixes, and More

The holidays came early this year! Or, now that I’ve actually looked at the calendar, perhaps they’re exactly on time. Whatever the case, we have some presents for you! Just today we’ve released a new update to our Big Data Tools plugin. We hope it will make working with Big Data a bit nicer and more convenient. The major new feature of this update is integration with AWS S3.

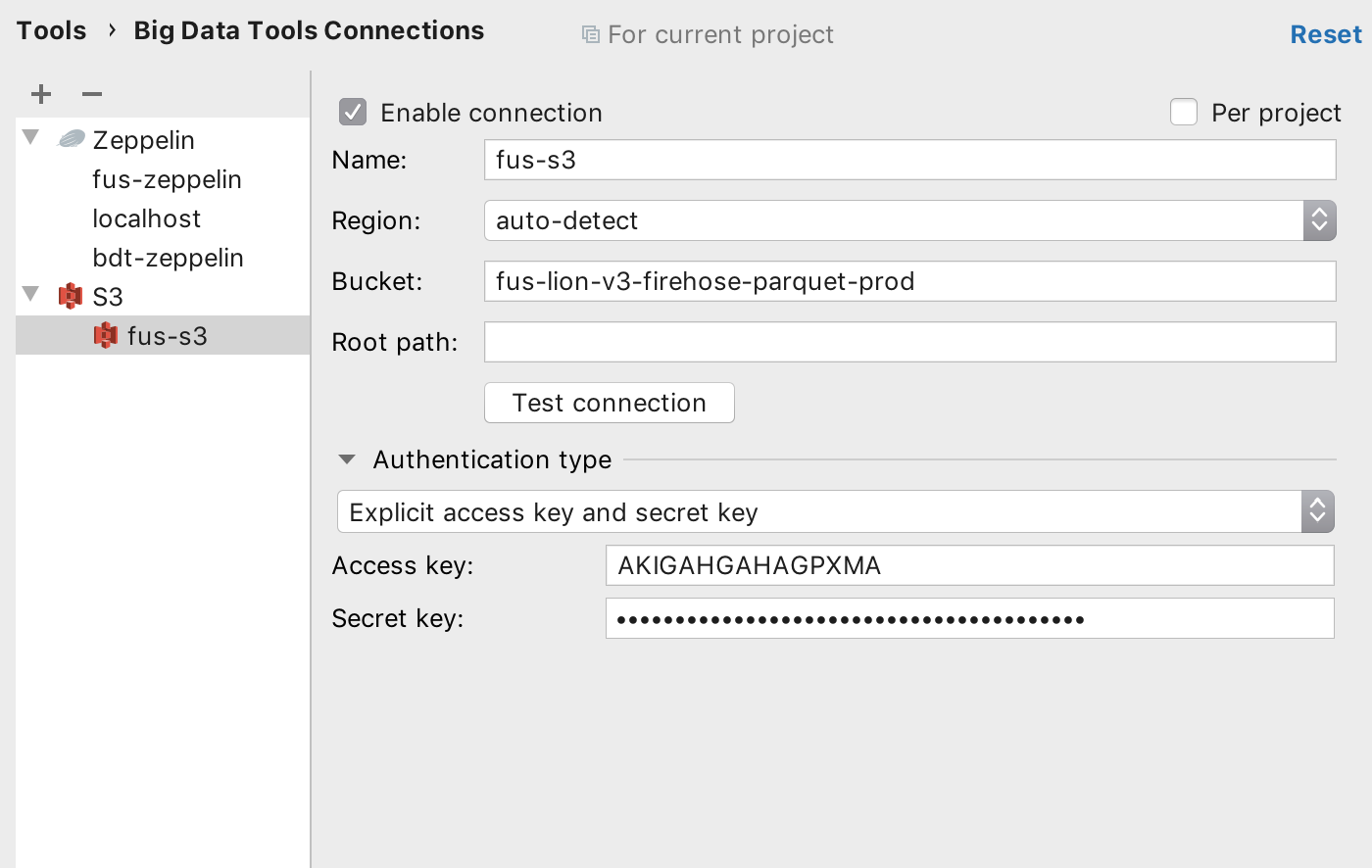

Now, in either the Big Data Tools tool window or the Big Data Tools Connections settings, you can configure an S3 bucket by providing the name of the bucket you’d like to access, the root path (in case you’d like to work with a limited set of files), and your AWS credentials:

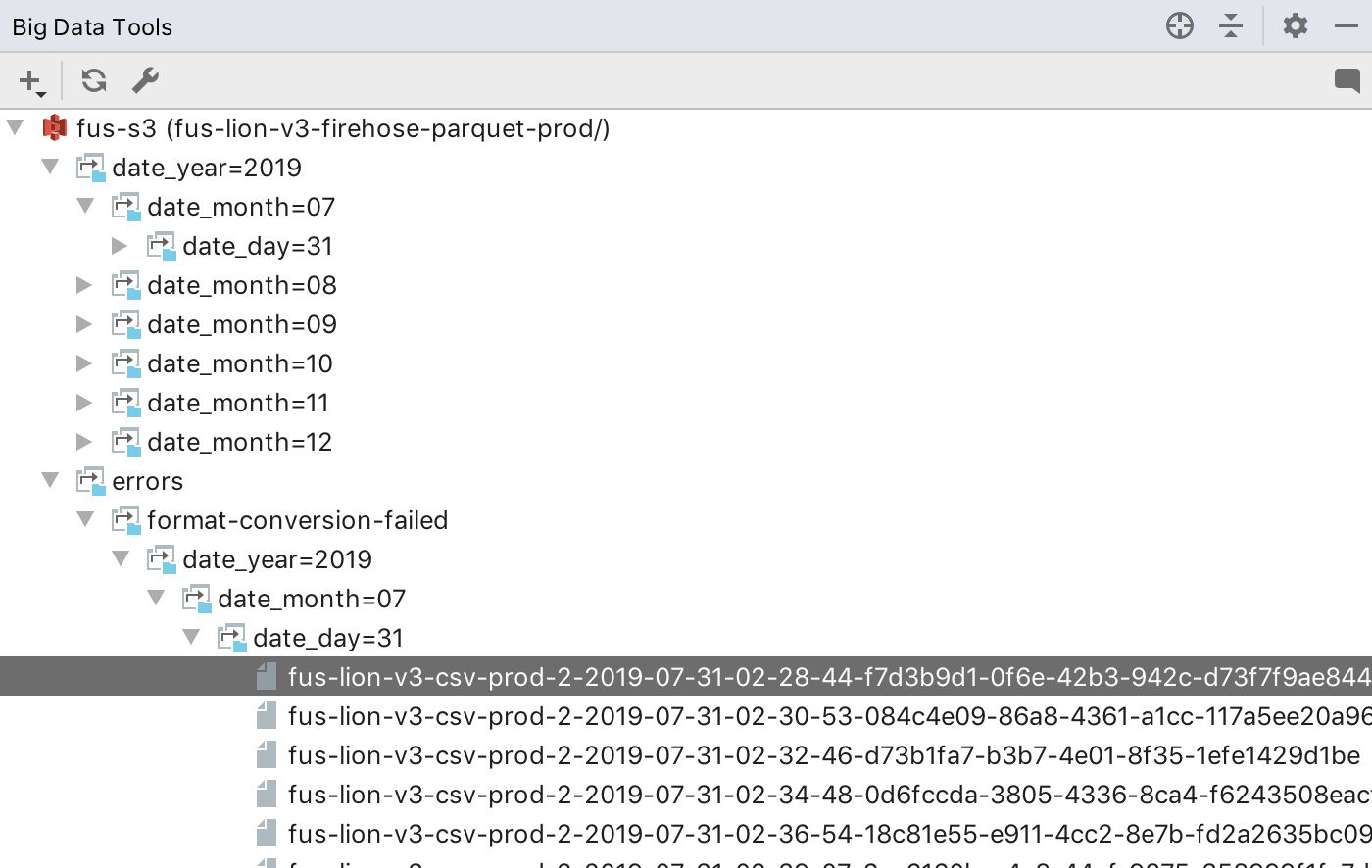

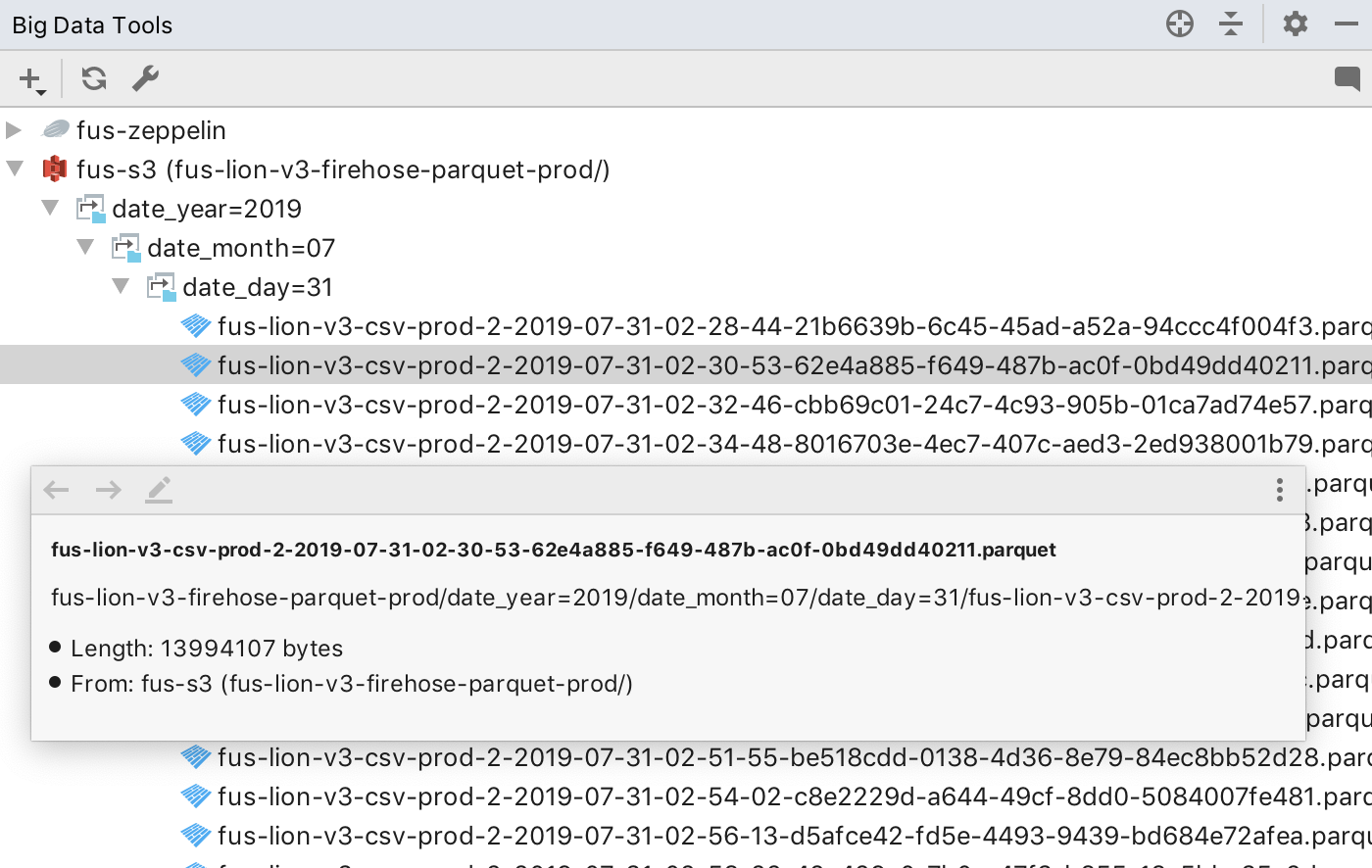

Once you’ve configured the bucket, you’ll be able to browse its contents in the Big Data Tools tool window.

The current integration lets you browse the folder structure, upload files to S3, and rename, move, delete, or download files, as well as see additional information about them.
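As background on what "folders" and a "root path" mean here: S3 stores objects under flat keys, and folder views are typically derived from key prefixes and a delimiter. The sketch below is not the plugin's implementation. It is a small self-contained illustration in Python, with a hypothetical `list_folder` function and made-up keys, of how a root path can limit the visible files and how subfolders fall out of the key names.

```python
from typing import Iterable, List, Tuple


def list_folder(
    keys: Iterable[str], root: str = "", delimiter: str = "/"
) -> Tuple[List[str], List[str]]:
    """Return (subfolders, files) found directly under `root` for flat object keys.

    Hypothetical helper for illustration only; it works on an in-memory list
    of keys and does not talk to AWS.
    """
    # Normalize the root so that "data" and "data/" behave the same.
    prefix = root if not root or root.endswith(delimiter) else root + delimiter
    folders = set()
    files = []
    for key in keys:
        if not key.startswith(prefix):
            continue  # outside the configured root path
        rest = key[len(prefix):]
        if not rest:
            continue  # placeholder object for the folder itself
        head, sep, _ = rest.partition(delimiter)
        if sep:
            folders.add(head + delimiter)  # key lives in a nested "folder"
        else:
            files.append(rest)  # key is a file directly under the root
    return sorted(folders), sorted(files)


# Made-up keys, purely for illustration.
example_keys = [
    "data/2019/events.parquet",
    "data/2019/users.csv",
    "data/readme.txt",
    "logs/app.log",
]

print(list_folder(example_keys, root="data"))
```

With `root="data"`, only keys under `data/` are considered, so `logs/app.log` never shows up. The same prefix matching is the idea behind filtering files by a prefix.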

The following are in our plans, but the current S3 integration doesn’t support them yet:

- Opening a quick preview of files (including binary formats such as Parquet)

- Showing additional file information such as modification date or file size

- A more convenient way of browsing the content of folders with lots of files

- Additional features such as file filtering (e.g. by a prefix)

The good news is that we’re working on it!

In other news, this update brings important bugfixes for unstable Zeppelin connections, and the plugin now correctly recognizes interpreters that have custom names.

Last but not least, we’ve published another plugin update to the Super-Early-Bird channel. To see that update, you need to manually add the Super-Early-Bird plugin repository URL in the IDE plugin settings. This update has some very exciting experimental features. Stay tuned, and make sure that you’ve signed up for the Big Data Tools Slack channel!

That’s it for today. Tomorrow we’ll post another announcement with more details about these experimental features and how to try them. A little teaser: it has something to do with Spark!

To see the complete list of bugfixes, refer to the release notes.

Keep the drive to develop!

Big Data Tools Team