TeamCity

Powerful CI/CD for DevOps-centric teams

AI in DevOps: Why Adoption Lags in CI/CD (and What Comes Next)

AI is everywhere except in CI/CD

Developers now use AI for nearly everything, except the part that actually ships code.

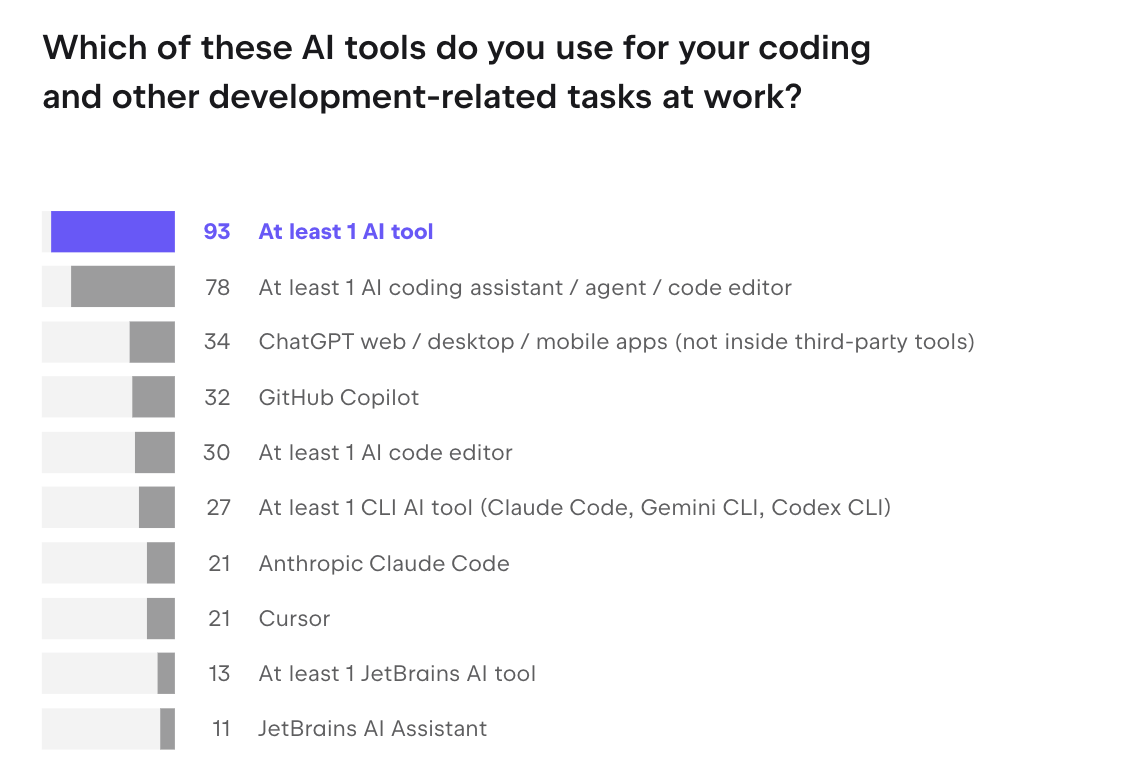

Recent surveys conducted by JetBrains indicate that AI is now widely used in software development, with workplace usage exceeding 90% and a large majority of developers already incorporating it into daily workflows. Developers rely on these tools for writing code, debugging, and navigating unfamiliar systems.

However, when you look at CI/CD pipelines, adoption is much more limited. This difference reflects how teams evaluate risk across the delivery lifecycle.

CI/CD pipelines are the stage where changes are validated and prepared for release. At this point, teams rely on consistent, reproducible signals. Introducing non-deterministic systems into this environment raises concerns around reliability, compliance, and production impact.

Note on data: The findings referenced in this article are based on internal JetBrains research conducted in January 2026 (AI Pulse) and October 2025 (State of Developer Ecosystem and State of CI/CD Tools reports). Given the pace of change in AI, these results should be interpreted as a snapshot of current trends rather than a fixed baseline.

Key insight: AI adoption is highest where the cost of mistakes is low. CI/CD operates under different constraints.

How AI is used in software development today

According to JetBrains’ AI Pulse (January 2026), a large majority of developers now use AI tools in their daily work. AI is already embedded in many parts of the software development lifecycle, but its impact is uneven.

In practice, most of the value today is concentrated upstream from CI/CD pipelines, in areas where developers can iterate quickly and validate results informally.

The table below compares how AI is used in day-to-day development work versus AI in DevOps pipelines. Each row highlights one aspect of the workflow and shows how the conditions differ.

| Dimension | Upstream (IDE/dev workflow) | CI/CD pipelines |
| --- | --- | --- |
| Feedback loop | Immediate and local, results are visible right away | Slower and system-wide, feedback comes from full pipeline runs |
| Cost of error | Low; changes can be reverted easily | Higher; failures can affect builds or releases |
| Validation | Informal and often manual | Formal and automated through tests, scans, and checks |
| Role of AI | Assists developers during coding and exploration | Assists, but outputs must pass strict validation |
| Adoption level | High; widely used in daily workflows | Limited; used cautiously in specific scenarios |

In day-to-day development work, AI is primarily used to accelerate routine tasks. Developers rely on it to generate code, refactor existing logic, and explore unfamiliar APIs. These interactions are fast and low-risk because they are easy to verify locally and can be discarded without consequences.

The same applies to debugging workflows, where AI helps interpret logs and suggest likely causes of failures. Even when suggestions are imperfect, they provide a useful starting point that reduces the time spent on manual investigation.

AI is also widely used for documentation and knowledge discovery. In large codebases, understanding existing systems often takes longer than writing new code. AI reduces this friction by summarizing components, explaining dependencies, and surfacing relevant context.

Similarly, in security workflows, AI is increasingly used to flag potential vulnerabilities and suggest fixes, particularly in earlier stages of development.

What unites these use cases is not the specific task, but the environment in which they operate. They all sit in parts of the workflow where feedback is immediate, mistakes are easy to detect, and decisions can be reversed with minimal cost.

CI/CD pipelines operate under different conditions. Changes are no longer local experiments but candidates for release. At this stage, the cost of error increases, and the system relies on consistent, reproducible validation signals.

This shift in constraints explains why AI adoption is not progressing at the same pace across the entire DevOps lifecycle. Generally speaking, AI is expanding more quickly in development workflows and more cautiously inside CI/CD pipelines.

Why AI adoption in CI/CD is still low

AI in DevOps is still at an early stage compared to development workflows.

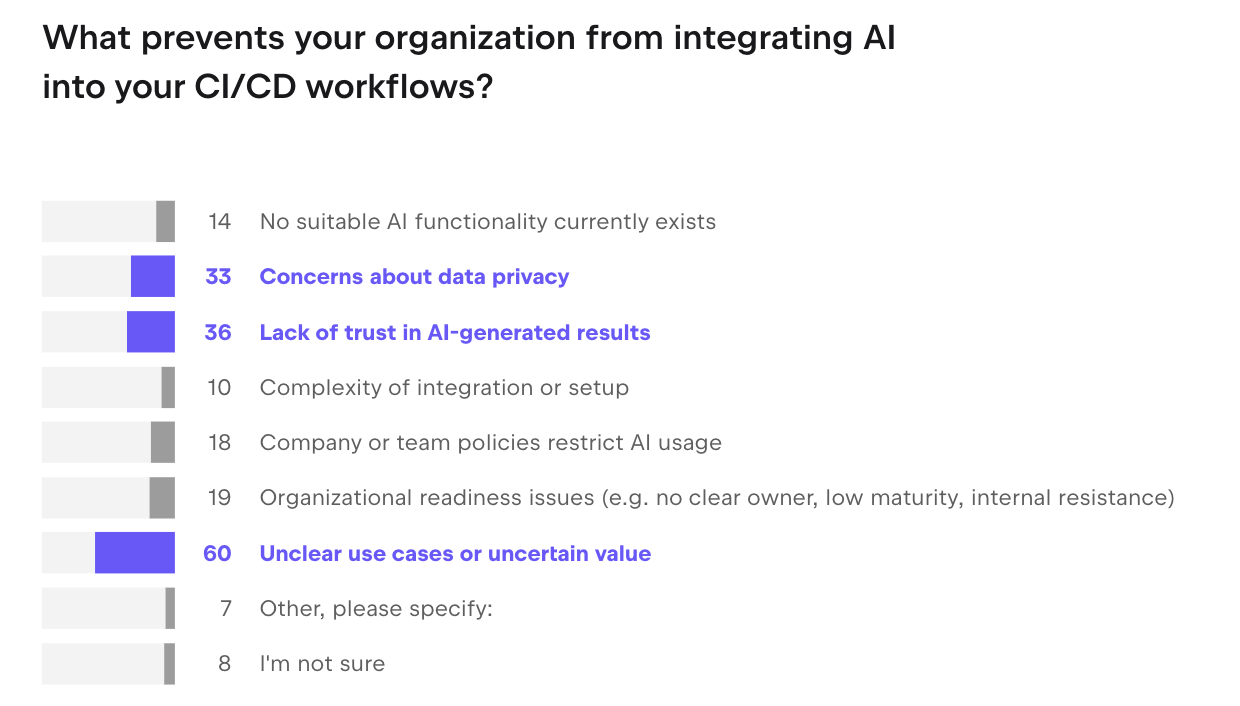

What the data shows

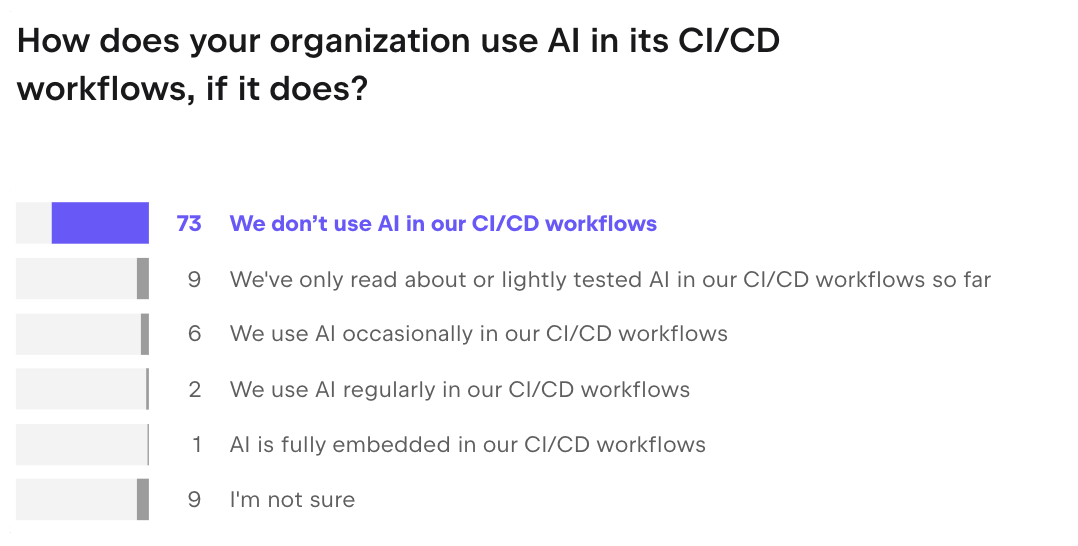

- 73% of organizations don’t use AI in CI/CD pipelines at all

- 60% cite unclear use cases or value

- 36% cite lack of trust in AI-generated results

- 33% cite data privacy concerns

These results suggest that the main challenge is not technical integration. Teams are evaluating whether AI can reliably and predictably deliver value within a system that is responsible for validation.

In practice, AI adoption inside pipelines is shaped more by trust and measurable outcomes than by model capability.

CI/CD is an evidence system, not just automation

CI/CD is often framed as automation, but that undersells what it actually does. Its real job is to give teams enough confidence to ship changes without fear.

Every step in a pipeline is designed to reduce the likelihood and impact of production failures. Builds confirm code compiles. Tests validate behavior. Deployment processes ensure changes can go out and come back cleanly.

Think of it this way: CI/CD turns code changes into signals teams can act on. Test results, logs, and deployment outcomes all answer one question: is this safe to release?

AI complicates this. It increases both the volume and variability of changes entering the pipeline, while CI/CD is built around predictability. That creates tension.

But it’s not a compatibility problem. AI-generated changes can move through a pipeline the same way as any other, provided the pipeline can validate them with enough confidence. What changes are the stakes. When code is being written faster and in larger quantities, having a system that can catch bad changes reliably matters more, not less.

Where AI actually works in CI/CD today

Current use of AI in CI/CD pipelines is focused on improving how evidence is generated and interpreted.

Failure diagnosis

AI is most effective in CI/CD when it is used to speed up failure analysis rather than to make decisions. By processing pipeline logs at scale, identifying recurring patterns, and correlating errors across runs, AI tools help engineers identify likely root causes much faster than manual inspection.

This reduces initial triage time and allows teams to focus on resolving issues, while keeping decisions firmly under human control.
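
As a rough illustration of the pattern-finding step, the sketch below groups error lines from several pipeline runs by a normalized signature, so the same failure is recognized even when timestamps, ports, or IDs differ. It uses plain string normalization rather than an AI model, and everything in it is an assumption made for the example: the function names `signature` and `recurring_failures`, the `ERROR` marker, and the log format are hypothetical, not part of any specific CI product.

```python
import re
from collections import defaultdict

# Fragments that usually differ between runs of the same underlying failure.
# The hex pattern runs first so "0x1f" is not split up by the digit pattern.
_VOLATILE = [
    (re.compile(r"0x[0-9a-fA-F]+"), "<hex>"),
    (re.compile(r"\d+"), "<n>"),
]


def signature(line: str) -> str:
    """Normalize an error line so the same failure maps to the same key."""
    for pattern, placeholder in _VOLATILE:
        line = pattern.sub(placeholder, line)
    return line.strip()


def recurring_failures(
    logs_by_run: dict[str, list[str]], min_runs: int = 2
) -> dict[str, list[str]]:
    """Return error signatures seen in at least `min_runs` distinct runs."""
    runs_by_signature: dict[str, set[str]] = defaultdict(set)
    for run_id, lines in logs_by_run.items():
        for line in lines:
            # Assumption: error lines contain the literal marker "ERROR".
            if "ERROR" in line:
                runs_by_signature[signature(line)].add(run_id)
    return {
        sig: sorted(runs)
        for sig, runs in runs_by_signature.items()
        if len(runs) >= min_runs
    }


logs = {
    "run-1": ["INFO start", "ERROR timeout after 30s connecting to db-7"],
    "run-2": ["ERROR timeout after 45s connecting to db-2"],
    "run-3": ["ERROR missing symbol Foo"],
}
recurring = recurring_failures(logs)
```

Here `recurring` contains a single signature shared by `run-1` and `run-2`. The output is a list of candidates for an engineer to look at, not a verdict, which matches the division of labor described above.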


Security fixes

In security workflows, AI is increasingly used to assist with identifying and remediating vulnerabilities discovered during pipeline execution. Rather than replacing existing scanning tools, AI builds on top of them by interpreting findings, suggesting fixes, and in some cases generating patches.

These suggestions still pass through standard CI/CD validation steps, including testing and review, which ensures that security improvements do not introduce regressions or unintended side effects.

Test optimization

Testing remains one of the most resource-intensive parts of CI/CD, and this is where AI is beginning to show measurable impact. By analyzing historical test runs and code changes, AI can prioritize which tests are most relevant for a given change, identify patterns of flaky behavior, and reduce redundant execution.

This leads to faster pipelines and more relevant feedback without giving up coverage altogether. Faster feedback is valuable, but it only matters if teams can still trust the results.

In practice, this means treating AI-driven test selection as an optimization layer rather than a replacement for validation. Teams often keep periodic full test runs in place and monitor for defects that might slip through reduced test sets. This approach allows them to increase speed without weakening the pipeline’s reliability.
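
One way to picture test selection as an optimization layer is the sketch below. It ranks tests by how often they failed in the past when the same files changed, and it forces a full run at a fixed interval as a safety net. This is a simple frequency heuristic standing in for whatever model a real tool would use; the function name `select_tests`, its parameters, and the shape of `history` are assumptions made for illustration.

```python
from collections import Counter


def select_tests(
    changed_files: set[str],
    history: list[tuple[set[str], set[str]]],
    all_tests: list[str],
    run_number: int,
    full_run_every: int = 10,
    top_n: int = 2,
) -> list[str]:
    """Pick tests for a change; every `full_run_every`-th run executes everything.

    `history` holds (changed_files, failed_tests) pairs from past runs.
    """
    # Periodic full run: a safety net for defects the reduced set might miss.
    if run_number % full_run_every == 0:
        return list(all_tests)

    # Score each test by how often it failed when one of these files changed.
    scores: Counter = Counter()
    for past_files, failed_tests in history:
        if past_files & changed_files:
            scores.update(failed_tests)

    ranked = [test for test, _ in scores.most_common() if test in all_tests]
    # With no relevant history there is no evidence to prune on, so run everything.
    return ranked[:top_n] if ranked else list(all_tests)


history = [
    ({"a.py"}, {"test_a"}),
    ({"a.py", "b.py"}, {"test_a", "test_b"}),
    ({"c.py"}, {"test_c"}),
]
all_tests = ["test_a", "test_b", "test_c"]
reduced = select_tests({"a.py"}, history, all_tests, run_number=3)
```

The two fallbacks are the point of the design: when the selector has no evidence, or when the periodic interval comes up, the pipeline reverts to full validation instead of trusting the reduced set.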

The common thread across these use cases: AI is making validation more efficient, not replacing it.

From copilots to agents: What’s changing

The role of AI in DevOps is evolving from assistance to participation in workflows. Early tools focused on suggesting code or helping developers understand unfamiliar parts of the codebase by explaining logic, tracing dependencies, and summarizing large components.

Newer systems are beginning to generate changes, propose pull requests, and iterate based on feedback.

This shift introduces a different interaction model. Instead of developers being the only source of change, AI systems become contributors that operate within repository and pipeline workflows. They can suggest modifications, open pull requests, and respond to feedback loops created by CI/CD pipelines.

At first glance, this can create the impression that AI and CI/CD are in conflict. AI introduces more change, more variability, and less predictability, while CI/CD exists to enforce stability and control.

In practice, the opposite is true. The more AI participates in generating changes, the more teams rely on CI/CD to evaluate and constrain those changes. Pipelines become the mechanism that determines which AI-generated outputs are acceptable and which are not.


The implication for CI/CD is significant. Pipelines are no longer validating only human-written code. They are increasingly responsible for evaluating changes produced by automated systems. CI/CD becomes the environment where AI-generated changes are tested, constrained, and approved before they reach production.

In practical terms, this means AI in DevOps moves from being a productivity layer in the IDE to becoming part of the delivery system itself. The quality of that system determines whether agent-driven workflows can be adopted safely.

The maturity model of AI in CI/CD

AI doesn’t enter CI/CD all at once. Teams move through it gradually, expanding what they trust AI to do as the system proves itself.

Most teams are still at the starting point: AI isn’t in the pipeline at all. Developers might use it heavily in their editors, but the pipeline itself treats every change the same, regardless of how it was written.

The first real integration is usually about failure analysis. When a pipeline breaks, AI can read the logs, identify the error, and suggest what likely caused it. Engineers still make the call on what to fix and how. AI just cuts down the time spent staring at output trying to figure out where things went wrong.

From there, teams start letting AI generate outputs: suggested fixes, pull requests, configuration changes, test improvements. These still go through normal review and validation. AI is part of the workflow, but a human is still deciding what ships.


The furthest stage is agent-like behavior, where AI can trigger actions on its own: opening pull requests, rerunning pipelines, proposing changes without being asked.

This doesn’t mean AI acts without limits. It can only trigger actions that have been explicitly permitted, every action is logged, and anything significant still requires a human to approve it before it goes through.
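
These three constraints (an explicit allowlist, logging of every action, and human approval for significant ones) can be sketched as a small gate that sits between an agent and the pipeline. The class name `ActionGate`, the action names, and the record fields are all hypothetical; a real system would define its own policy model and would persist the audit log rather than hold it in memory.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical action names, chosen for illustration only.
ALLOWED_ACTIONS = {"open_pull_request", "rerun_pipeline", "deploy"}
NEEDS_APPROVAL = {"deploy"}


@dataclass
class ActionGate:
    audit_log: list = field(default_factory=list)

    def request(self, agent: str, action: str, approved_by: Optional[str] = None) -> bool:
        """Decide whether an agent-initiated action may proceed, and record it."""
        if action not in ALLOWED_ACTIONS:
            decision = "denied: not permitted"
        elif action in NEEDS_APPROVAL and approved_by is None:
            decision = "pending: human approval required"
        else:
            decision = "allowed"
        # Every request is logged, including the ones that are refused.
        self.audit_log.append(
            {"agent": agent, "action": action, "approved_by": approved_by, "decision": decision}
        )
        return decision == "allowed"


gate = ActionGate()
rerun_ok = gate.request("ci-agent", "rerun_pipeline")
delete_ok = gate.request("ci-agent", "delete_branch")
deploy_ok = gate.request("ci-agent", "deploy")
deploy_approved = gate.request("ci-agent", "deploy", approved_by="alice")
```

In this sketch the rerun goes through, the unlisted action is refused, and the deploy only proceeds once a named human is attached to the request. All four requests end up in `gate.audit_log`.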

How far a team can go with AI in CI/CD depends less on the sophistication of the models than on whether the surrounding system (the validation, the policies, the pipeline signals) is reliable enough to support it. Each step forward requires stronger validation, clearer policies, and more reliable signals from the pipeline.

Most teams are still in the first two stages, where AI is used to support understanding rather than to execute changes. Survey data backs that up: direct AI integration inside CI/CD workflows remains uncommon. According to the JetBrains AI Pulse, 78.2% of respondents don’t use AI in CI/CD workflows at all.

To summarize this progression, the stages can be viewed side by side:

| Stage | What changes in practice | Level of control |
| --- | --- | --- |
| No AI | Pipelines treat all changes equally | Fully human-driven |
| AI-assisted understanding | AI explains failures and logs | Human decisions |
| AI-assisted proposals | AI suggests fixes, PRs, and test changes | Human review required |
| Agentic workflows | AI can trigger actions within pipelines | Governed and constrained |

This framing highlights that adoption is not about adding AI features, but about increasing the level of trust a team is willing to place in automated decisions.

What this means for CI/CD systems

As AI becomes more embedded in development workflows, the role of CI/CD systems shifts from automation to control and validation.

Three areas become critical:

First, the reliability and clarity of pipeline results start to limit how effectively teams can use AI. AI increases the volume of changes entering the system, which puts pressure on testing, build stability, and signal reliability. Flaky tests, inconsistent builds, and unclear feedback loops become more visible and more costly.

Second, pipelines need clear controls over how changes progress. As AI systems begin to propose or trigger changes, teams rely on approvals, policy checks, access controls, and audit trails to govern them. These controls already exist in CI/CD, but they become more central as automation increases.

Third, integration with external systems becomes more important. CI/CD platforms are increasingly expected to expose pipelines, logs, and workflows in a way that other tools, including AI systems, can interact with. This shifts CI/CD from a closed automation system to a component in a broader toolchain.
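
To make the first of these areas more concrete, the sketch below shows one common way to surface flaky tests from historical results: a test that both passed and failed on the same commit produced a different signal for identical code. The function name `flaky_tests` and the `(commit, test_name, passed)` record shape are assumptions for the example, not a description of any particular CI tool.

```python
from collections import defaultdict


def flaky_tests(results: list[tuple[str, str, bool]]) -> list[str]:
    """Return tests that both passed and failed on the same commit.

    `results` holds (commit, test_name, passed) records from past runs.
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for commit, test_name, passed in results:
        outcomes[(commit, test_name)].add(passed)
    # Same code, different outcome: the result depends on something other than the change.
    return sorted({test for (_, test), seen in outcomes.items() if len(seen) == 2})


results = [
    ("abc123", "test_login", True),
    ("abc123", "test_login", False),
    ("abc123", "test_cart", True),
    ("def456", "test_cart", False),
]
flaky = flaky_tests(results)
```

Only `test_login` is reported here. `test_cart` changed outcome between two different commits, which may be a genuine regression rather than flakiness, so it is left out.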

Conclusion: AI in DevOps needs a trust layer

AI is accelerating software development workflows. CI/CD systems continue to focus on validation and release readiness. These two facts are not in conflict, but they do create pressure.

As AI-generated changes become more common, the role of CI/CD becomes more critical in ensuring those changes meet the standards required for production. Teams will increasingly evaluate their delivery systems based on their ability to handle higher change volume while maintaining reliability.

But the industry has not yet fully answered several questions. As AI agents become more capable and more autonomous, how do you build governance mechanisms that scale with them? What does meaningful human oversight look like when a pipeline is processing hundreds of AI-generated changes per day? And at what point does the evidence that CI/CD produces need to be audited by AI itself?

These are not hypothetical. They are the questions that will define how CI/CD evolves over the next few years.