AI for PHP: How to Tweak AI Prompts to Improve PHP Tests

In our previous post, we looked at how JetBrains AI Assistant can automatically scaffold unit tests for us. By generating the boring boilerplate code, it allows us to jump straight into the more interesting part of making our tests.

Playing around with AI-driven test generation, I have often been surprised by how accurate AI Assistant is when it comes to generating code that fits within my project. However, there are cases where I’d like its output to be slightly different. If only we could give it some hints about what the outcome should look like.

Well, it turns out we can do precisely that.

Prompt specification

Let’s circle back to the example from our previous post: We’re working on tests for the CreateArgumentComment class. This class writes a record into the database, determines which users should be notified, and then sends those user notifications (using another class: SendUserMessage). Here’s what that code looks like:

    final readonly class CreateArgumentComment
    {
        public function __invoke(
            Argument $argument,
            User $user,
            string $body,
        ): void
        {
            ArgumentComment::create([
                'user_id' => $user->id,
                'argument_id' => $argument->id,
                'body' => $body,
            ]);

            $this->notifyUsers($argument, $user);
        }

        private function notifyUsers(Argument $argument, User $user): void
        {
            $usersToNotify = $argument->comments
                ->map(fn (ArgumentComment $comment) => $comment->user)
                ->add($argument->user)
                ->reject(fn (User $other) => $other->is($user))
                ->unique(fn (User $user) => $user->id);

            foreach ($usersToNotify as $userToNotify) {
                (new SendUserMessage)(
                    to: $userToNotify,
                    sender: $user,
                    url: action(RfcDetailController::class, ['rfc' => $argument->rfc_id, 'argument' => $argument->id]),
                    body: 'wrote a new comment',
                );
            }
        }
    }

You might have noticed that, in the previous post, AI Assistant didn’t write any tests for the notification part of this class. In fact, it did write tests for this part in some iterations, but not every time.

One interesting generation included the following comment at the end of the test class:

    // And: we should expect the users to be notified about the comment,
    // this part is not implemented due to its complexity
    // it requires mocking dependencies and testing side effects
    // 'notifyUsers' is private method and we can't access it directly
    // however, in real world scenario you might want to consider testing
    // it (possibly refactoring to a notification class, and testing independently)
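To make the refactoring hinted at in that comment a bit more concrete, here is a minimal sketch of how the "who gets notified" logic could live in its own class. It is only an illustration under some assumptions: `Participant` and `ResolveUsersToNotify` are hypothetical names that don't exist in the project, and plain PHP arrays stand in for the Eloquent models and Laravel collections so that the snippet runs on its own (PHP 8.2 or later).

```php
<?php

declare(strict_types=1);

// Hypothetical stand-in for the Eloquent User model; only the id matters here.
final readonly class Participant
{
    public function __construct(public int $id, public string $name) {}
}

// Hypothetical extraction of notifyUsers(): it only decides who gets notified.
final readonly class ResolveUsersToNotify
{
    /**
     * @param list<Participant> $commenters authors of the existing comments
     * @return list<Participant>
     */
    public function __invoke(
        Participant $argumentAuthor,
        array $commenters,
        Participant $commentAuthor,
    ): array
    {
        $recipients = [];

        foreach ([...$commenters, $argumentAuthor] as $candidate) {
            if ($candidate->id === $commentAuthor->id) {
                continue; // never notify the person who wrote the comment
            }

            // Keyed by id, so a user who appears twice is only kept once.
            $recipients[$candidate->id] ??= $candidate;
        }

        return array_values($recipients);
    }
}

$alice = new Participant(1, 'Alice');
$bob = new Participant(2, 'Bob');
$carol = new Participant(3, 'Carol');

// Alice wrote the argument; Bob (twice), Carol and Alice commented; Carol adds a new comment.
$recipients = (new ResolveUsersToNotify)($alice, [$bob, $carol, $bob, $alice], $carol);

echo implode(', ', array_map(fn (Participant $p) => $p->name, $recipients)), PHP_EOL; // prints "Bob, Alice"
```

A class like this has no side effects, so it can be tested without mocking anything; sending the actual messages would stay a separate concern.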

We could, of course, ask AI Assistant to generate these tests anyway, but I actually agree with it here: notifyUsers should be a class on its own, tested in isolation. While I had initially planned to dig into the notification tests, AI Assistant has highlighted a better approach to take with them and helped me reframe my project. Thanks, AI Assistant!

Since we’ve decided to test notifyUsers separately, we’ll set it aside and consider another use case. Imagine we want to use Mockery instead of Laravel’s factories. We start by generating our tests the same way we did in the previous post, but this time we’ll spend a little more time fine-tuning AI Assistant’s output.

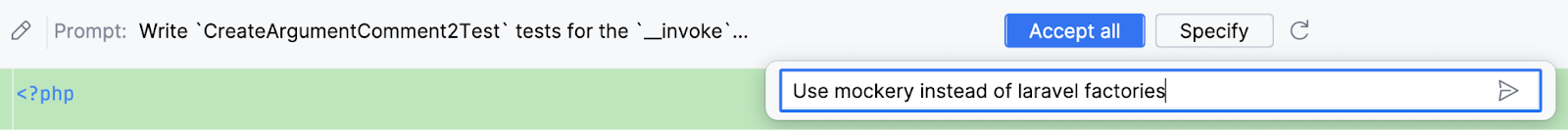

After generating a draft version of our test class, you’ll notice a Specify button in the top toolbar:

This button allows you to send additional information to AI Assistant, further specifying the prompt. You could, for example, write a prompt that tells it to use Mockery like so:

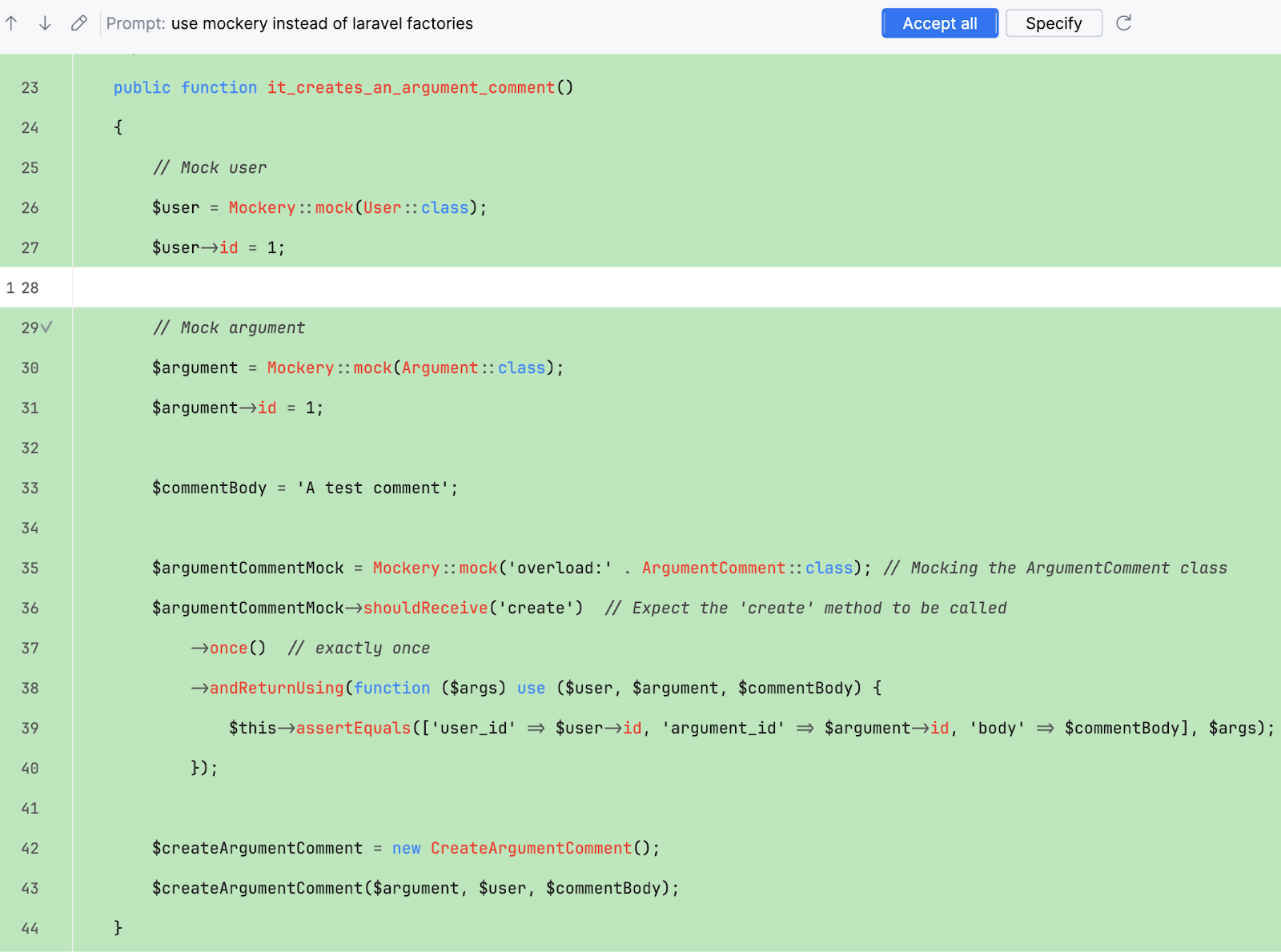

After writing this custom prompt and pressing Enter, you’ll see that AI Assistant updates the code accordingly.
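What AI Assistant actually generates depends on your project and on the prompt, so the following is not its output. It is a hand-written sketch of what a Mockery-style test generally looks like, to give you a reference point when reviewing the result. `MessageSender` and `NotifyRecipients` are hypothetical stand-ins invented for this example (the real CreateArgumentComment class instantiates SendUserMessage inline, which makes it harder to mock), and the snippet assumes that `mockery/mockery` and PHPUnit are installed via Composer.

```php
<?php

declare(strict_types=1);

use Mockery\Adapter\Phpunit\MockeryPHPUnitIntegration;
use PHPUnit\Framework\TestCase;

// Hypothetical collaborator, standing in for something like SendUserMessage.
interface MessageSender
{
    public function send(string $to, string $from, string $body): void;
}

// Hypothetical class under test: forwards one message per recipient.
final readonly class NotifyRecipients
{
    public function __construct(private MessageSender $sender) {}

    /** @param list<string> $recipients */
    public function __invoke(array $recipients, string $from): void
    {
        foreach ($recipients as $to) {
            $this->sender->send($to, $from, 'wrote a new comment');
        }
    }
}

final class NotifyRecipientsTest extends TestCase
{
    // Verifies the Mockery expectations and closes the container after each test.
    use MockeryPHPUnitIntegration;

    public function test_it_sends_one_message_per_recipient(): void
    {
        // The mock replaces the real sender, so no factories or database are involved.
        $sender = Mockery::mock(MessageSender::class);
        $sender->shouldReceive('send')->once()->with('alice', 'carol', 'wrote a new comment');
        $sender->shouldReceive('send')->once()->with('bob', 'carol', 'wrote a new comment');

        (new NotifyRecipients($sender))(['alice', 'bob'], 'carol');
    }
}
```

The trade-off compared to factories is that the test no longer touches the database, but it also only verifies the interactions you describe in the expectations.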

You can fine-tune your prompts as much as you’d like, until you’ve found a solution that suits your needs.

Conclusion

As you experiment with prompt specification, keep in mind the goal we discussed at the very beginning of this blog-post series: We’re not aiming for AI Assistant to generate the perfect tests for us. We want it to do the boring boilerplate setup so that we can focus primarily on making the final tweaks – the parts that are most fun.

With that in mind, I think it’s important not to over-specify your prompts. It’ll probably be more productive to generate code that’s 90% to your liking instead of spending additional time trying to find the perfect prompt.

One last side effect that you might notice is that AI Assistant actually learns from your prompts over time. For example, now that we’ve specified that we would like it to use Mockery instead of Laravel factories, the tool will take that into account the next time it generates tests.

If you want to learn more about how AI Assistant works under the hood and how it deals with your data, head over to the JetBrains AI Terms of Service (section 5) to read all about it.

—

So far in this series, we’ve used AI Assistant to generate tests for us, and we’ve learned how to fine-tune our prompts to get the output we need.

What’s the next step? PhpStorm has some pretty neat features for combining AI Assistant with custom actions. Subscribe to our blog to stay updated on our upcoming posts, where we’ll continue exploring the benefits of using AI for your PHP routines.

Useful links

Did you enjoy reading this blog post? Here are more from this series:

- AI for PHP: How To Automate Unit Testing Using AI Assistant

- AI for PHP: How to Make AI Assistant Generate Test Implementations

Resources:

- AI Assistant in PhpStorm (documentation)

- AI Assistant pricing
