Remote Development on Raspberry Pi: Checking Internet Quality (Part 1)

We all know that ISPs have a habit of overselling their connections, and this sometimes leads our connections to not be as good as we’d like them to be. Also, many of us have Raspberry Pis lying around waiting for cool projects. So let’s put that Pi to use to check on our internet connection!

One of the key metrics of an internet connection is its ping time to other hosts on the internet. So let’s write a program that regularly pings another host and records the results. Some of you may have heard of smokeping, which does exactly that, but that’s written in Perl and a pain to configure. So let’s go and reinvent the wheel, because we can.

PS: For those of you wanting to execute code on other remote computers, like an AWS instance or a DigitalOcean droplet, the process is exactly the same.

Raspberry Ping

The app we will build consists of two parts: one part does the measurements, and the other visualizes previous measurements. To measure the results we can just call the ping command-line tool that every Linux machine (including the Raspberry Pi) ships with. We will then store the results in a PostgreSQL database.

In part 2 of this blog post (coming next week) we’ll have a look at our results: we will view them using a Flask app, which uses Matplotlib to draw a graph of recent results.

Preparing the Pi

As we will want to be able to view the webpage with the results later, it’s important to give our Pi a fixed IP within our network. To do so, edit /etc/network/interfaces. See this tutorial for additional details. NOTE: don’t do this if you’re on a company network; your network administrator will cut your hands off with a rusty knife. Don’t ask me how I know or how I’m typing this.

After you’ve set the Pi to use a static IP, use raspi-config on the command line. Go to Advanced Options, choose SSH, and choose Yes. When you’ve done this, you’re ready to get started with PyCharm.

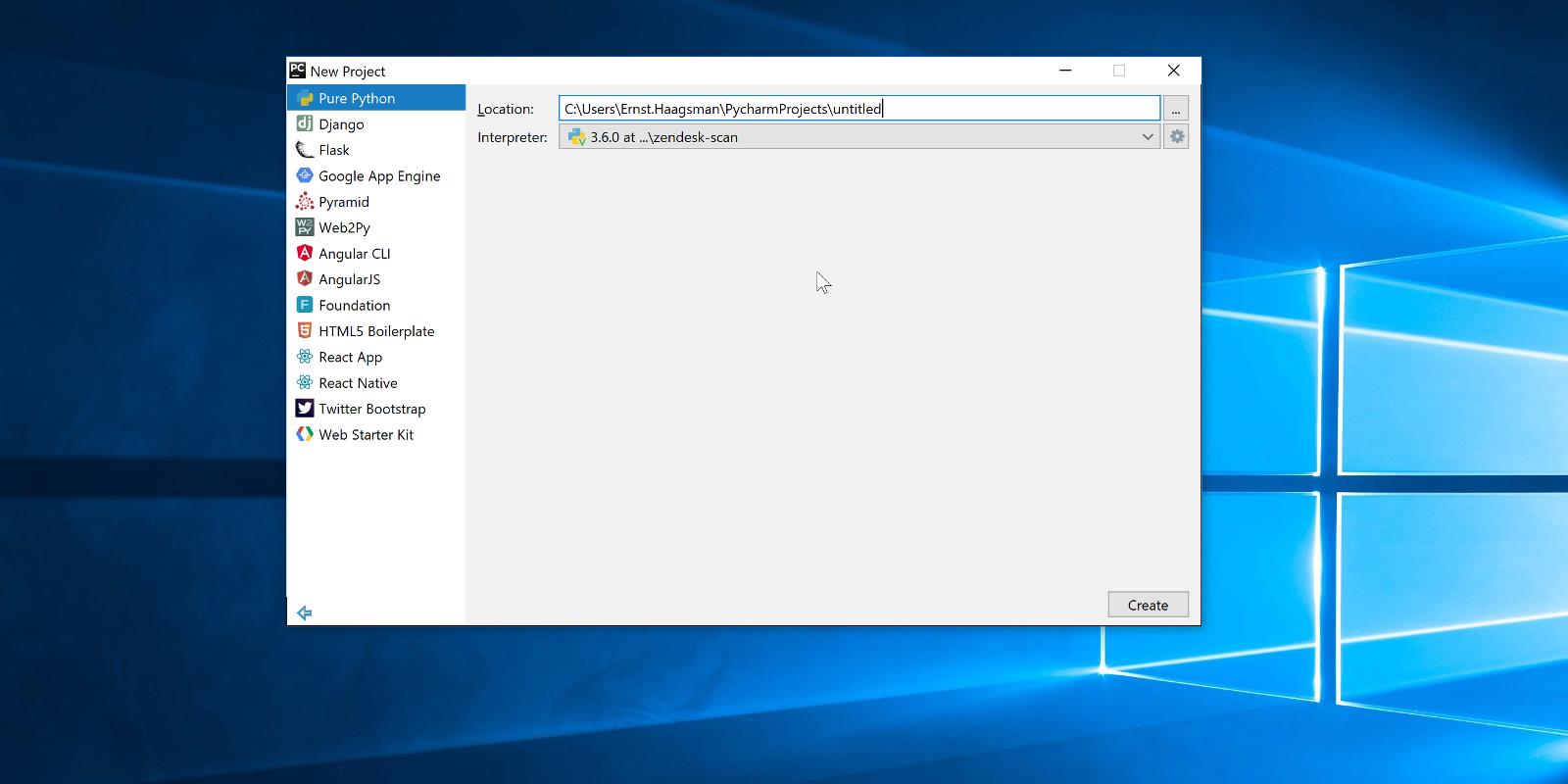

Let’s connect PyCharm to the Raspberry Pi. Go to File | Create New Project, and choose Pure Python (we’ll add Flask later, so you could choose Flask here as well if you’d prefer). Then use the gear icon to add an SSH remote interpreter. Use the credentials that you’ve set up for your Raspberry Pi. I’m going to use the system interpreter. If you’d like to use a virtualenv instead, you can browse to the python executable within your virtualenv as well.

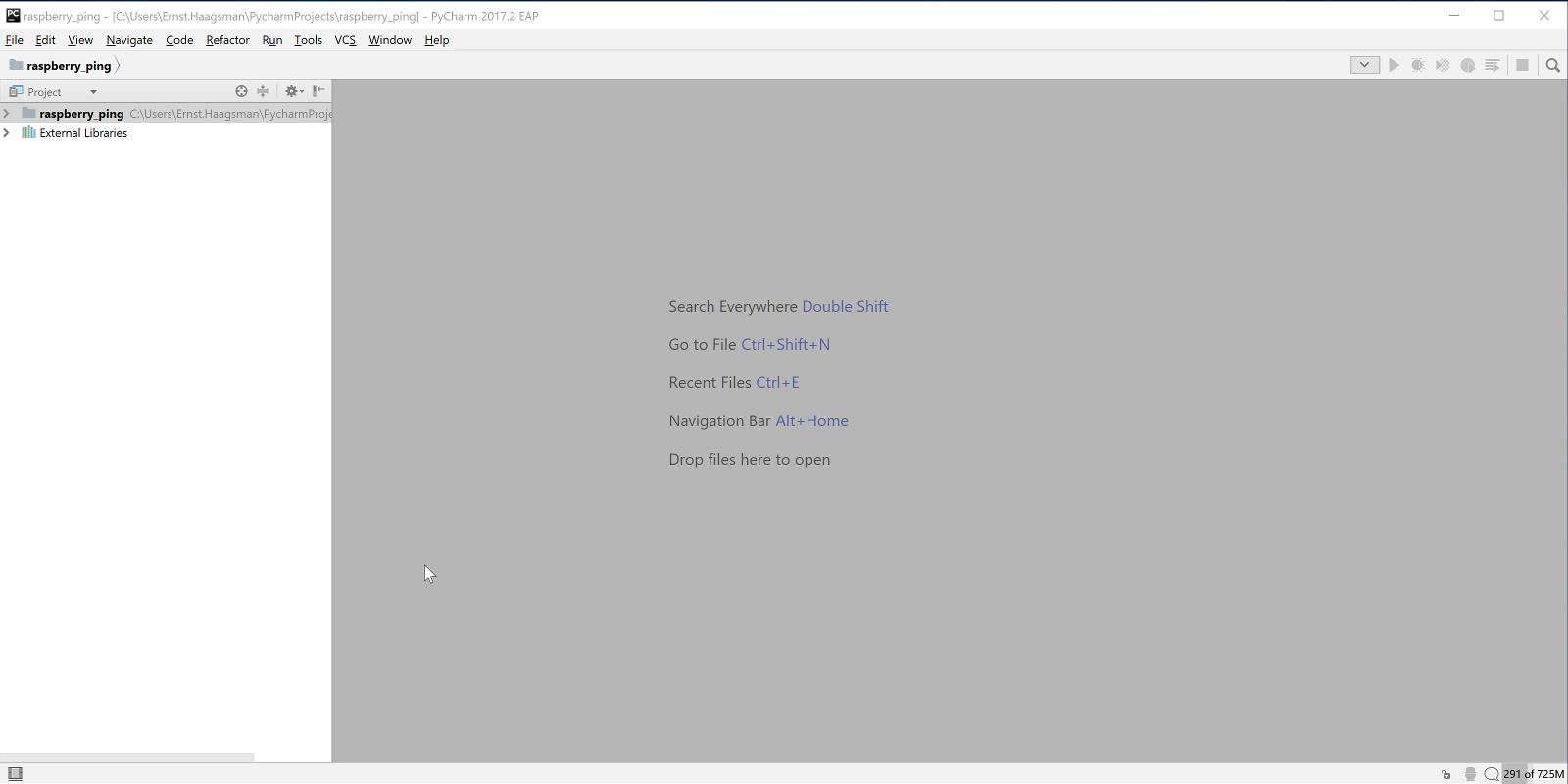

After you’ve created the project, there are a couple things we need to take care of before we can start coding. So let’s open an SSH terminal to do these things. Within PyCharm press Ctrl+Shift+A, then type and choose ‘Start SSH session’, then pick your Raspberry Pi from the list, and you should be connected.

We now need to install several items:

- PostgreSQL

- libpq-dev, needed for psycopg2

- python-dev, needed to compile psycopg2

Run sudo apt-get update && sudo apt-get install -y postgresql libpq-dev python-dev to install everything at once.

After installing the prerequisites, we now need to set up the permissions in PostgreSQL. The easiest way to do this is to go back to our SSH terminal, and run sudo -u postgres psql to get an SQL prompt as the postgres user. Now we’ll create a user (called a ‘role’ in Postgres terminology) with the same name as the user that we run the process with:

CREATE ROLE pi WITH LOGIN PASSWORD 'hunter2';

Make sure that the role in PostgreSQL has the same name as your Linux username. You might also want to substitute a better password. It is important to end your SQL statements with a semicolon (;) in psql, because it will assume you’re writing a multi-line statement until you terminate with a semicolon. We’re granting the pi user login rights, which just means that the user can log in. Roles without login rights are used to create groups.

We also need to create a database. Let’s create a database named after the user (this makes running psql as pi very easy):

CREATE DATABASE pi WITH OWNER pi;

Now exit psql with \q.
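Naming the database after the role pays off in Python too: libpq, which psycopg2 is built on, defaults the role to the current operating system user and connects over the local Unix socket when no host is given. Here is a minimal sketch of a connection check, assuming psycopg2 is installed (we get to that later in this post) and that your PostgreSQL allows peer authentication for local connections, which is the Debian default. The helper names default_dsn and check_connection are made up for this example.

```python
import getpass


def default_dsn(username=None):
    """Build a libpq connection string for a database named after the user."""
    # No host is given, so libpq connects over the local Unix socket,
    # which is where peer authentication applies.
    username = username or getpass.getuser()
    return "dbname={}".format(username)


def check_connection(dsn):
    """Open a connection, run a trivial query, and return the role name."""
    import psycopg2  # imported lazily so default_dsn works without it

    conn = psycopg2.connect(dsn)
    try:
        with conn.cursor() as cur:
            cur.execute("SELECT current_user")
            return cur.fetchone()[0]
    finally:
        conn.close()
```

Running check_connection(default_dsn()) as the pi user should return pi if the role and database were created as described above.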

Capturing the Pings

To get information on the quality of the internet connection, let’s ping a server using the system’s ping utility, and then read the result with a regex. So let’s take a look at the output of ping:

PING jetbrains.com (54.217.236.18) 56(84) bytes of data.
64 bytes from ec2-54-217-236-18.eu-west-1.compute.amazonaws.com (54.217.236.18): icmp_seq=1 ttl=47 time=32.9 ms
64 bytes from ec2-54-217-236-18.eu-west-1.compute.amazonaws.com (54.217.236.18): icmp_seq=2 ttl=47 time=32.9 ms
64 bytes from ec2-54-217-236-18.eu-west-1.compute.amazonaws.com (54.217.236.18): icmp_seq=3 ttl=47 time=32.9 ms
64 bytes from ec2-54-217-236-18.eu-west-1.compute.amazonaws.com (54.217.236.18): icmp_seq=4 ttl=47 time=32.9 ms
--- jetbrains.com ping statistics ---
4 packets transmitted, 4 received, 0% packet loss, time 3003ms
rtt min/avg/max/mdev = 32.909/32.951/32.992/0.131 ms

All lines with individual round trip times begin with ‘64 bytes from’. Let’s create a file ‘ping.py’, and start coding: we can first get the output of ping, and then iterate over the lines, picking the ones that start with a number and ‘bytes from’.

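import re
import subprocess32

host = 'jetbrains.com'
# Send five pings; the count is passed as its own argument
ping_output = subprocess32.check_output(["ping", "-c", "5", host])

for line in ping_output.split('\n'):
    # Reply lines look like "64 bytes from ..."
    if re.match(r"\d+ bytes from", line):
        print(line)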
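One caveat with check_output: it raises CalledProcessError when the command exits with a non-zero status, and the Linux ping does exactly that when it receives no replies at all. For a connection monitor, that is the case we care about most. Below is a sketch of a wrapper that keeps the output either way. It uses the standard library subprocess module from Python 3 (subprocess32 is a backport of that module to Python 2 and offers the same check_output call), and the name run_ping is made up for this example.

```python
import subprocess


def run_ping(command):
    """Run a ping-style command and return (exit_code, output_text)."""
    try:
        output = subprocess.check_output(command, stderr=subprocess.STDOUT)
        return 0, output.decode("utf-8", "replace")
    except subprocess.CalledProcessError as error:
        # check_output raises on a non-zero exit status; whatever the command
        # printed before exiting is still available on the exception.
        return error.returncode, error.output.decode("utf-8", "replace")
```

You would call it as run_ping(["ping", "-c", "5", "jetbrains.com"]) and treat a non-zero exit code as a measurement worth recording rather than a crash.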

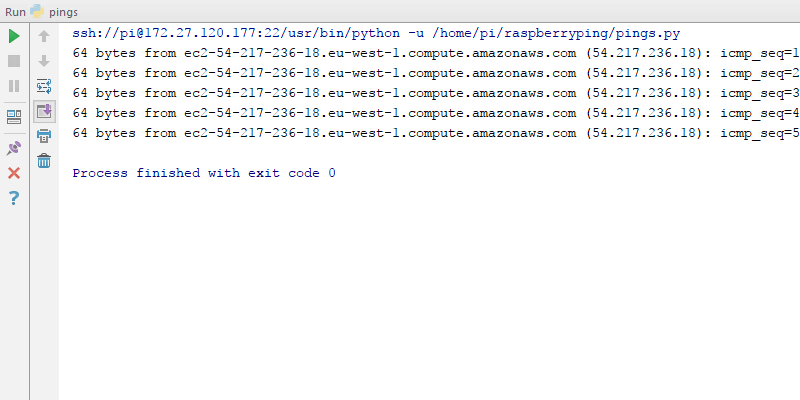

At this point if you run the code (Ctrl+Shift+F10), you should see this code running, remotely on the Raspberry Pi:

If you get a file not found problem, you may want to check if the deployment settings are set up correctly: Tools | Deployment | Automatic Upload should be checked.
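Printing the matching lines is a start, but to store anything useful we need numbers. Here is a sketch of how the round trip times and the packet loss figure could be pulled out of the ping output shown earlier. The patterns are written against that sample output only, and the function names are made up for this example; other ping implementations format their lines differently.

```python
import re

# Matches reply lines such as:
# "64 bytes from host (1.2.3.4): icmp_seq=1 ttl=47 time=32.9 ms"
REPLY_PATTERN = re.compile(r"^\d+ bytes from .*icmp_seq=(\d+) .*time=([\d.]+) ms")

# Matches the summary fragment "0% packet loss"
LOSS_PATTERN = re.compile(r"(\d+(?:\.\d+)?)% packet loss")


def parse_replies(ping_output):
    """Return a list of (sequence_number, milliseconds) tuples."""
    replies = []
    for line in ping_output.splitlines():
        match = REPLY_PATTERN.match(line)
        if match:
            replies.append((int(match.group(1)), float(match.group(2))))
    return replies


def parse_packet_loss(ping_output):
    """Return the packet loss percentage, or None if no summary was found."""
    match = LOSS_PATTERN.search(ping_output)
    return float(match.group(1)) if match else None
```

Keeping the sequence number alongside the time makes it possible to spot which packets in a run went missing.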

Storing the Pings

We wanted to store our pings in PostgreSQL, so let’s create a table for them. First, we need to connect to the database. As our database is only exposed to localhost, we will need to use an SSH tunnel, which PyCharm’s database tools can set up when you add the data source.

After you’ve connected, create the table by executing the setup_db.sql script. To do this, copy and paste the script from GitHub into the SQL console that opened right after connecting, and then click the green play button.

Now that we’ve got this working, let’s expand our script to record the pings into the database. To connect to the database from Python we’ll need to install psycopg2. You can do this by going to File | Settings | Project Interpreter and using the green ‘+’ icon to install the package. If you’d like to see the full script, you can have a look on GitHub.
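To give a rough idea of what the recording step looks like, here is a sketch that runs without a database server. It swaps in Python’s built-in sqlite3 module as a stand-in for psycopg2; both follow the same DB-API pattern of connect, cursor, execute, and commit. The table and column names are assumptions made up for this example (the real schema is whatever setup_db.sql defines), and so is the record_pings function.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema for illustration; the real one lives in setup_db.sql.
CREATE_TABLE = """
CREATE TABLE IF NOT EXISTS pings (
    recorded_at TEXT NOT NULL,
    destination TEXT NOT NULL,
    rtt_ms REAL NOT NULL
)
"""


def record_pings(conn, destination, rtts_ms, recorded_at=None):
    """Insert one row per round trip time and commit them together."""
    recorded_at = recorded_at or datetime.now(timezone.utc).isoformat()
    rows = [(recorded_at, destination, rtt) for rtt in rtts_ms]
    cur = conn.cursor()
    # sqlite3 uses "?" placeholders; psycopg2 uses "%s" for the same purpose.
    cur.executemany(
        "INSERT INTO pings (recorded_at, destination, rtt_ms) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()
    return len(rows)


conn = sqlite3.connect(":memory:")  # stand-in for psycopg2.connect("dbname=pi")
conn.execute(CREATE_TABLE)
record_pings(conn, "jetbrains.com", [32.9, 33.1], recorded_at="2017-01-01T00:00:00")
```

Passing the values as parameters instead of formatting them into the SQL string lets the driver handle quoting, which matters once the host name comes from the command line.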

Cron

To make sure that we actually regularly record the pings, we need to schedule this script to be run. For this we will use cron. As we’re using peer authentication to the database, we need to make sure that the script is run as the pi user. So let’s open an SSH session (making sure we’re logged in as pi), and then run crontab -e to edit our user crontab. Then at the bottom of the file add:

*/5 * * * * /home/pi/raspberryping/ping.py jetbrains.com >> /var/log/raspberryping.log 2>&1

Make sure you have a newline at the end of the file.
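Note that this crontab line runs ping.py directly and hands it the host as an argument. For that to work the file needs a shebang line and the executable bit (chmod +x), and the script has to read the host from its arguments. Here is a sketch of what that entry point could look like; falling back to jetbrains.com when no argument is given is an assumption for this example, as is the helper name host_from_args.

```python
#!/usr/bin/env python
import sys

DEFAULT_HOST = "jetbrains.com"  # assumption: fall back to the host used earlier


def host_from_args(argv):
    """Return the host given as the first command-line argument, if any."""
    return argv[1] if len(argv) > 1 else DEFAULT_HOST


if __name__ == "__main__":
    print("pinging {}".format(host_from_args(sys.argv)))
```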

The first */5 means that the script will be run every 5 minutes. If you’d like a different frequency, you can learn more about crontabs on Wikipedia. Now we also need to create the log file and make sure that the script can write to it:

sudo touch /var/log/raspberryping.log
sudo chown pi:pi /var/log/raspberryping.log
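Coming back to the schedule for a moment: if you do pick a different frequency, it helps to know that a step like */5 selects values from the field’s range, starting at the lowest one, rather than counting minutes since the last run. This little sketch lists the minutes a step value matches; expand_step is a name made up for this example.

```python
def expand_step(step, low=0, high=59):
    """List the values a cron field of the form */step matches."""
    return [value for value in range(low, high + 1) if (value - low) % step == 0]


every_five = expand_step(5)
every_seven = expand_step(7)
```

With a step of 5 you get twelve evenly spaced runs per hour, from minute 0 to minute 55. A step of 7 ends at minute 56 and starts again at minute 0, so one gap per hour is only four minutes long.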

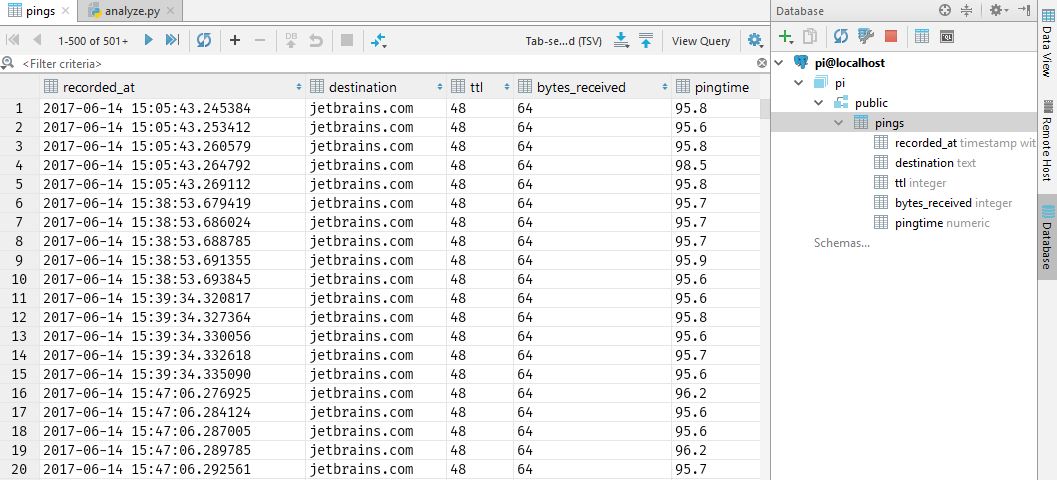

At this point you may want to grab a cup of coffee, and when you come back a while later some ping times should have been logged. To verify, let’s check with PyCharm’s database tools. Open the database tool window (on the right of the screen) and double click the table. You should see that it contains values:

Read the second part of this blog post to learn how to analyze the ping times, and graph them with Matplotlib.