Upsource

Code Review and Project Analytics

What to look for in a Code Review: Performance

This is part 3 of 6 posts on what to look for in a code review. See other posts from the series.

In part three of our series on code reviews, we’re going to cover what you can look for in terms of the performance of the code under review.

As with all architecture/design areas, the non-functional requirements for the performance of a system should have been set up-front. Whether you’re working on a low-latency trading system which has to respond in nano-seconds, you’re creating an online shopping site which needs to be responsive to the user, or you’re writing a phone app to manage a “To Do” list, you should have some idea about what’s considered “too slow”.

Let’s cover some of the things that affect performance that a reviewer can look for during a code review.

Firstly

Did this piece of functionality have hard performance requirements?

Does the piece of code under review fall under an area that had a previously published SLA? Or do the requirements state the performance characteristics required?

If the original requirements were a bug along the lines of “the login screen is too slow to load”, the original developer should have clarified what would be a suitable loading time – otherwise how can the reviewer or the author be confident that the speed has been sufficiently improved?

If so, is there a test that proves it meets those?

Any performance critical system should have automated performance tests which ensure that published SLAs (“all order requests serviced in less than 10ms”) are met. Without these, you’re relying on your users to inform you of failures to meet your SLAs. Not only is this a poor user experience, but could lead to avoidable fines and fees. The last post in this series covered code-reviewing tests in detail.

Has the fix/new functionality negatively impacted the results of any existing performance tests?

If you are regularly running performance tests (or if you have a suite that can be run on demand), check that new code in performance-critical areas hasn’t introduced a decrease in system performance. This might be an automated process, but since running performance tests as part of a CI environment is much less common than running unit tests, it is worth specifically mentioning it as a possible step during review.

If there are no hard performance requirements for this code review?

There’s limited value spending hours agonising over optimisations that will save you a few CPU cycles. But there are things a reviewer can check for in order to ensure that the code doesn’t suffer from common performance pitfalls that are completely avoidable. Check out the rest of the list to see if there are easy wins to prevent future performance problems.

Calls outside of the service/application are expensive

Any use of systems outside your own application that require a network hop are generally going to cost you much more than a poorly optimised equals() method. Consider:

Calls to the database

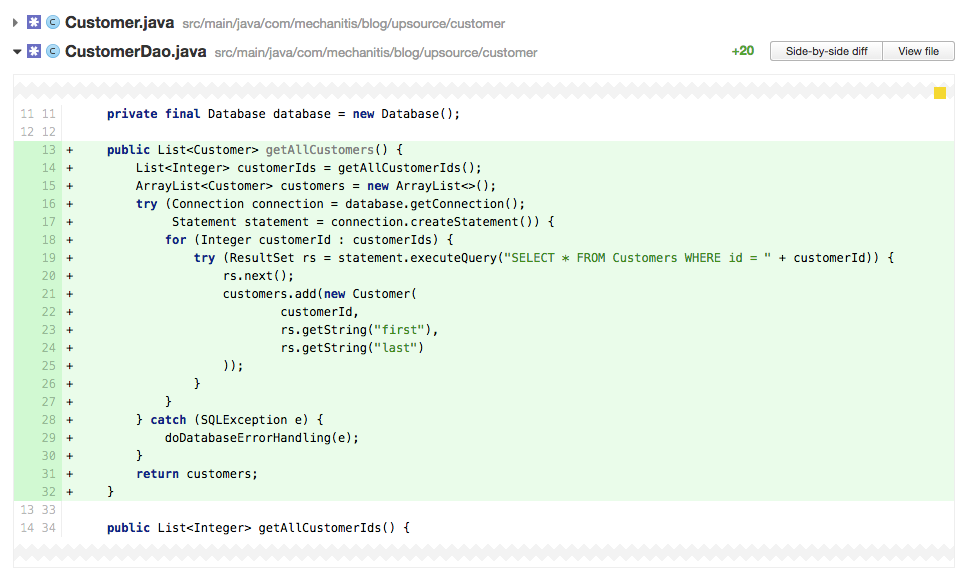

The worst offenders might be hiding behind abstractions like ORMs. But in a code review you should be able to catch common causes of performance problems, like individual calls to a database inside a loop – for example, loading a list of IDs, then querying the database for each individual item that corresponds to that ID.

For example, line 19 above might look fairly innocent, but it’s inside a for loop – you have no idea how many calls to the database this code might result in.

Unnecessary network calls

Like databases, remote services can sometimes be over-used, with multiple remote calls being made where a single one might suffice, or where batching or caching might prevent expensive network calls. Again, like databases, sometimes an abstraction can hide that a method call is actually calling a remote API.

Mobile / wearable apps calling the back end too much

This is basically the same as “unnecessary network calls”, but with the added problem that on mobile devices, not only will unnecessary calls to the back-end cost you performance, it will also cost you battery life.

Using resources efficiently/effectively

Following on from how we use our network resources, a reviewer can look at the use of other resources to identify possible performance problems.

Does the code use locks to access shared resources? Could this result in poor performance or deadlocks?

Locks are a performance killer and very hard to reason about in a multi-threaded environment. Consider patterns like; having only a single thread that writes/changes values while all other threads are free to read; or using lock free algorithms.

Is there something in the code which could lead to a memory leak?

In Java, some common causes can be: mutable static fields, using ThreadLocal and using a ClassLoader.

Is there a possibility the memory footprint of the application could grow infinitely?

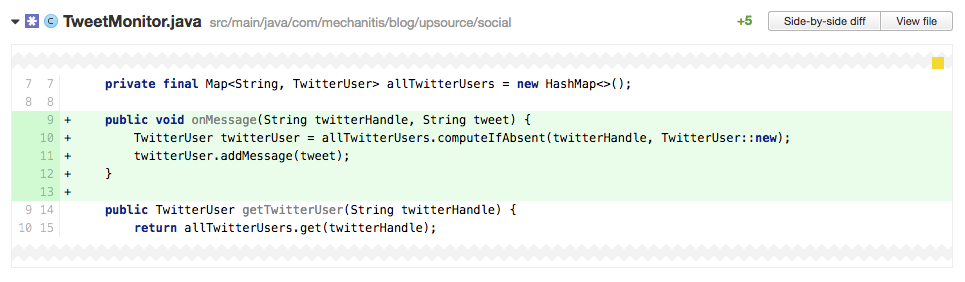

This is not the same as a memory leak – a memory leak is where unused objects cannot be collected by the garbage collector. But any language, even non-garbage-collected ones, can create data structures that grow indefinitely. If, as a reviewer, you see new values constantly being added to a list or map, question if and when the list or map is discarded or trimmed.

In the code review above, we can see all messages from Twitter being added to a map. If we examine the class more fully, we see that the allTwitterUsers map is never trimmed, nor is the list of tweets in a TwitterUser. Depending upon how many users we’re monitoring and how often we add tweets, this map could get very big, very fast.

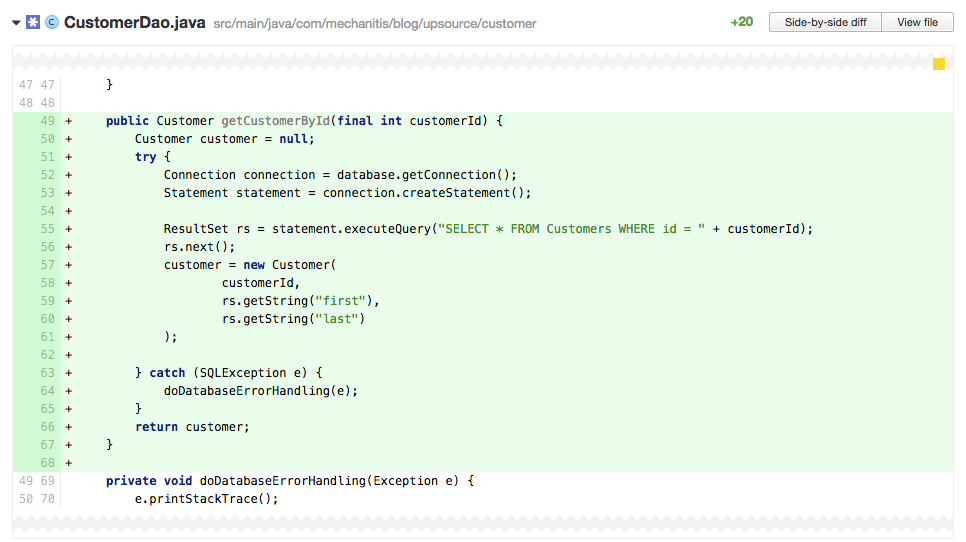

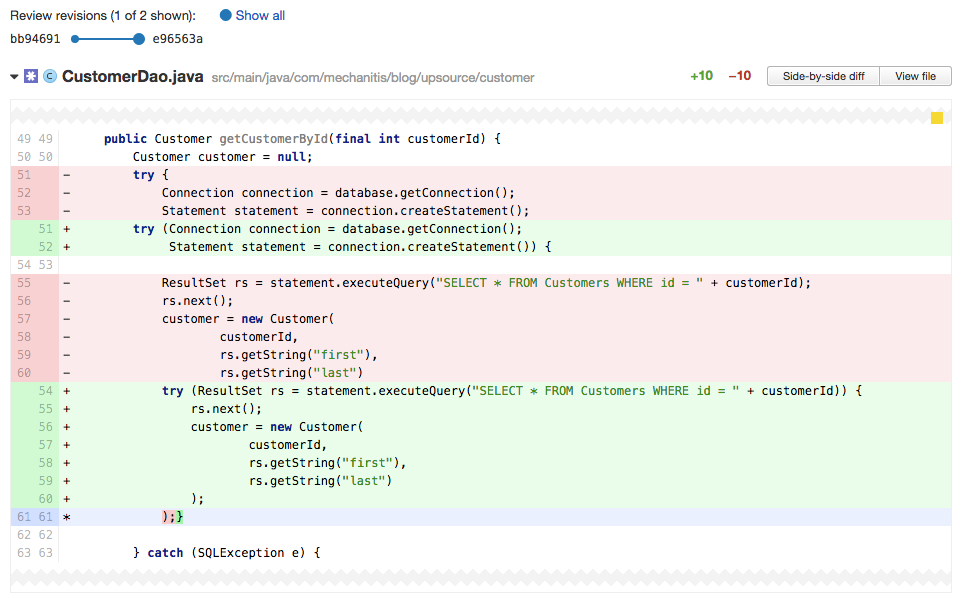

Does the code close connections/streams?

It’s easy to forget to close connections or file/network streams. When you’re reviewing someone else’s code, whichever language it happens to be in, if a file, network or database connection is in use, make sure it is correctly closed.

It’s very easy for the original code author to miss this problem, as the above code will compile happily. As the reviewer, you should spot that the connection, statement and result set all need closing before the method exits. In Java 7, this has become much easier to manage thanks to try-with-resources. The screenshot below shows the result of a code review where the author has changed the code to use try-with-resources.

Are resource pools correctly configured?

The optimal configuration for an environment is going to depend on a number of factors, so it’s unlikely that as a reviewer you’ll know immediately if, for example, a database connection pool is correctly sized. But there are a few things you can tell at a glance, for example is the pool too small (e.g. sized at one) or too big (millions of threads). Fine tuning these values requires testing in an environment as close to the real environment as possible, but a common problem that can be identified at code review is when a pool (thread pool or connection pool, for example) is really far too large. Logic dictates that larger is better, but of course each of these objects takes up resources, and the overhead of managing thousands of them is usually much higher than the benefits of having many of them available. If in doubt, the defaults are usually a good start. Code that deviates from default settings should prove the value with some sort of test or calculation.

Warning signs a reviewer can easily spot

Some types of code suggests immediately a potential performance problem. This will depend upon the language and libraries used (please let us know in the comments of “code smells” in your environment).

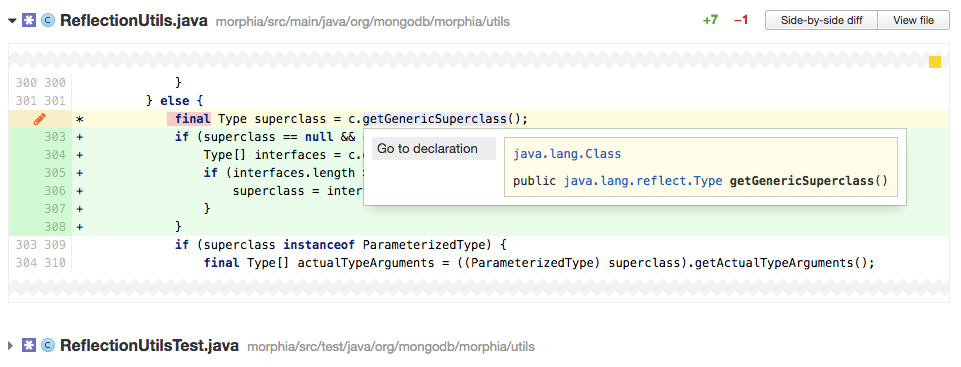

Reflection

Reflection in Java is slower than doing things without reflection. If you’re reviewing code that contains reflection, question whether this is absolutely required.

The screenshot above shows a reviewer clicking on a method in Upsource to check where it comes from, and you can see that this method is returning something from the java.lang.reflect package, which should be a warning sign.

Timeouts

When you’re reviewing code, you might not know what the correct timeout for an operation is, but you should be thinking “what’s the impact on the rest of the system while this timeout is ticking down?”. As the reviewer you should consider the worst case – is the application blocking while a 5 minute timeout is ticking down? What’s the worst that would happen if this was set to one second? If the author of the code can’t justify the length of a timeout, and you, the reviewer, don’t know the pros and cons of a selected value, then it’s a good time to get someone involved who does understand the implications. Don’t wait for your users to tell you about performance problems.

Parallelism

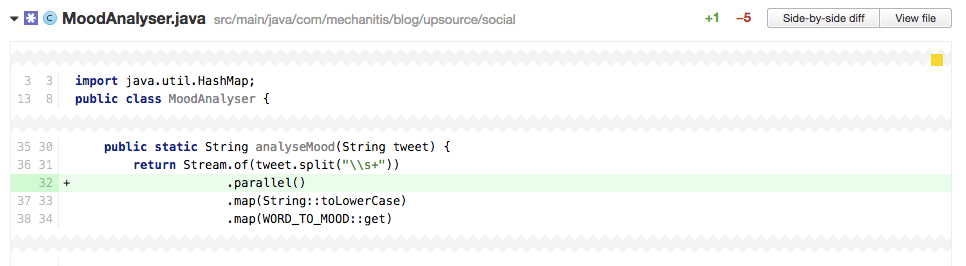

Does the code use multiple threads to perform a simple operation? Does this add more time and complexity rather than improving performance? With modern Java, this might be more subtle than creating new threads explicitly: does the code use Java 8’s shiny new parallel streams but not benefit from the parallelism? For example, using a parallel stream on a small number of elements, or on a stream which does very simple operations, might be slower than performing the operations on a sequential stream.

In the code above, the added use of parallel is unlikely to give us anything – the stream is acting upon a Tweet, therefore a string no longer than 140 characters. Parallelising an operation that’s going to work on so few words will probably not give a performance improvement, and the cost of splitting this up over parallel threads will almost certainly be higher than any gain.

Correctness

These things are not necessarily going to impact the performance of your system, but since they’re largely related to running in a multi-threaded environment, they are related to the topic.

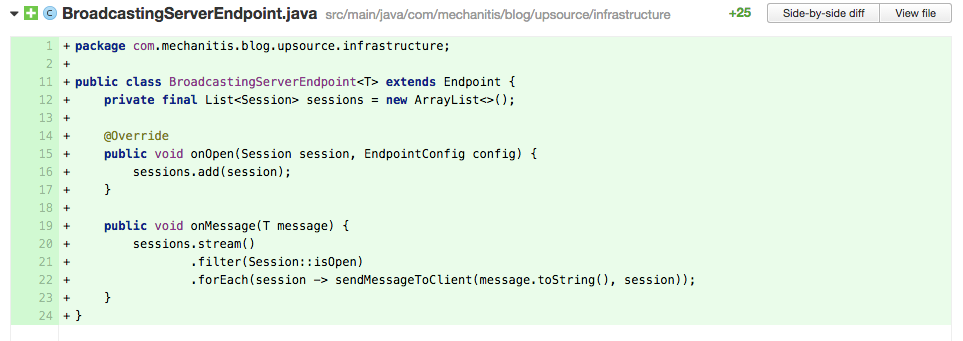

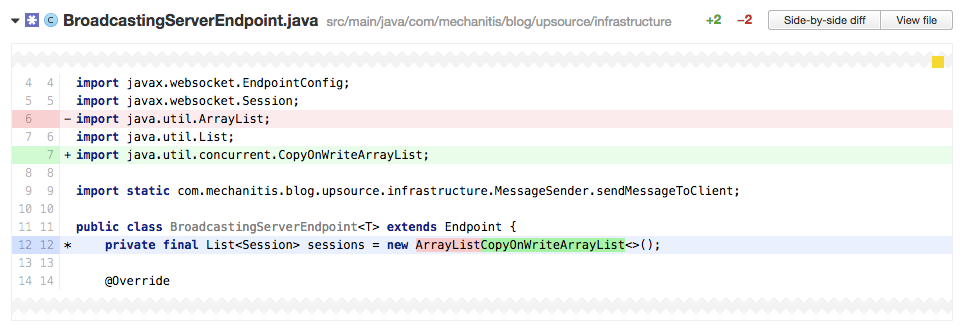

Is the code using the correct data structure for a multi-threaded environment?

In the code above, the author is using an ArrayList on line 12 to store all the sessions. However, this code, specifically the onOpen method, is called by multiple threads, so the sessions field needs to be a thread safe data structure. For this case, we have a number of options: we could use a Vector, we could use Collections.synchronizedList() to create a thread safe List, but probably the best selection for this case is to use CopyOnWriteArrayList, since the list will change far less often than it will be read.

Is the code susceptible to race conditions?

It’s surprisingly easy to write code that can cause subtle race conditions when used in a multi-threaded environment. For example:

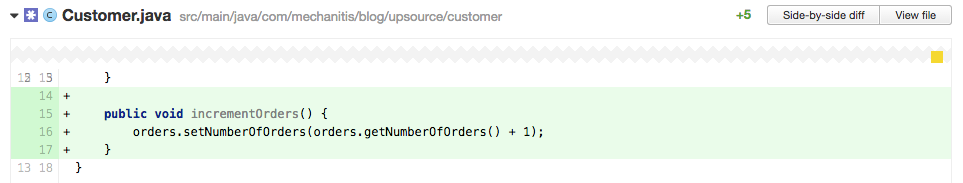

Although the increment code is on a single line (line 16), it’s possible for another thread to increment the orders between this code getting it and this code setting the new value. As a reviewer, look out for get and set combos that are not atomic.

Is the code using locks correctly?

Related to race conditions, as a reviewer you should be checking that the code being reviewed is not allowing multiple threads to modify values in a way that could clash. The code might need synchronization, locks, or atomic variables to control changes to blocks of code.

Is the performance test for the code valuable?

It’s easy to write poor microbenchmarks, for example. Or if the test uses data that’s not at all like production data, it might be giving misleading results.

Caching

While caching might be a way to prevent making too many external requests, it comes with its own challenges. If the code under review uses caching, you should look for some common problems, for example, incorrect invalidation of cache items.

Code-level optimisations

If you’re reviewing code, and you’re a developer, this following section may have optimisations you’d love to suggest. As a team, you need to know up-front just how important performance is to you, and whether these sorts of optimisations are beneficial to your code.

For most organisations that aren’t building a low-latency application, these optimisations are probably fall under the category of premature optimisations.

- Does the code use synchronization/locks when they’re not required? If the code is always run on a single thread, locks are unnecessary overhead.

- Is the code using a thread-safe data structure where it’s not required? For example, can

Vectorbe replaced withArrayList? - Is the code using a data structure with poor performance for the common operations? For example, using a linked list but needing to regularly search for a single item in it.

- Is the code using locks or synchronization when it could use atomic variables instead?

- Could the code benefit from lazy loading?

- Can

ifstatements or other logic be short-circuited by placing the fastest evaluation first? - Is there a lot of string formatting? Could this be more efficient?

- Are the logging statements using string formatting? Are they either protected by an

ifto check logging level, or using a supplier which is evaluated lazily?

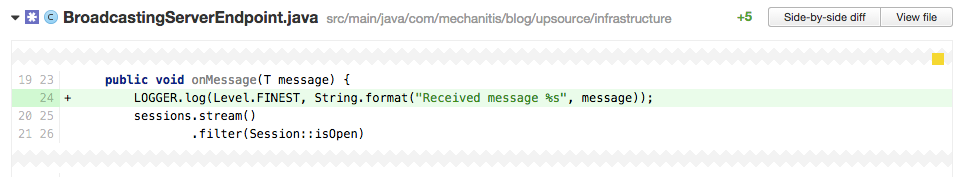

The code above only logs the message when the logger is set to FINEST. However, the expensive string format will happen every time, regardless of whether the message is actually logged.

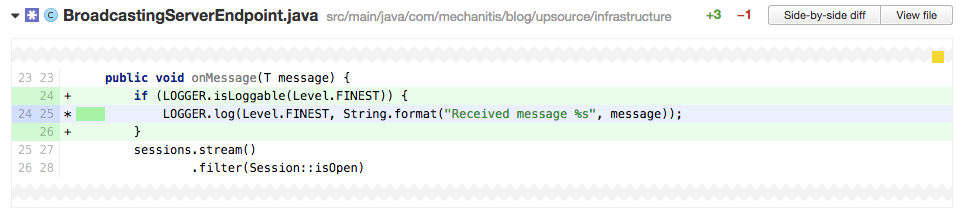

Performance can be improved by ensuring this code is only run when the log level is set to a value where the message will be written to the log, like in the code above.

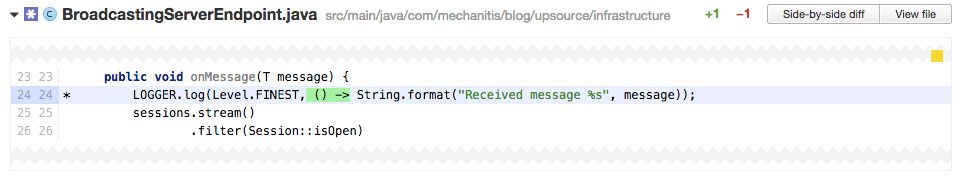

In Java 8, these performance gains can be obtained without the boilerplate if, by using a lambda expression. Because of the way the lambda is used, the string format will not be done unless the message is actually logged. This should be the preferred approach in Java 8.

That’s a lot of things to worry about when it comes to performance….

As with my original list of things to look for in code review, this article highlights some areas that your team might want to consistently check for during reviews. This will depend upon the performance requirements of your project.

Although this article is aimed at all developers, many of the examples are Java / JVM specific. I’d like to finish with some easy things for reviewers of Java code to look for, that will give the the JVM a good chance of optimising your code so that you don’t have to:

- Write small methods and classes

- Keep the logic simple – no deeply nested

ifsor loops

The more readable the code is to a human, the more chance the JIT compiler has of understanding your code enough to optimise it. This should be easy to spot during code review – if the code looks understandable and clean, it also has a good chance of performing well.

In Summary

When it comes to performance, understand that there are some areas that you may be able to get quick wins in (for example, unnecessary calls to a database) that can be identified during code review, and some areas that will be tempting to comment on (like the code-level optimisations) that might not gain enough value for the needs of your system.