Scientific Research Initiatives by JetBrains

You may have heard that at JetBrains, we “develop with pleasure” and have the “drive to develop”, but what you may not have heard is that our interests are not limited to just development and the creation of powerful, productivity-enhancing tools. We very much believe in improving on what we can and leaving a better future for those who follow in our wake. One of the ways we do this is by investing heavily in ongoing scientific research in cutting-edge innovation and education. Our research efforts see us collaborating with some of the top scientific institutions across the world to support applied research that impacts people’s lives and drives us all forward.

These scientific research efforts are united under the JetBrains Research initiative.

We would like to introduce to you the groups in JetBrains Research and describe the scope of their work.

JetBrains Research includes over 150 researchers working on projects across 19 separate lab groups. The lab groups are each working on diverse topics ranging from particle physics to software engineering.

Most scientific output nowadays comes in the form of research papers, which are an essential part of a researcher’s profile in the academic realm, and are necessary to compete for positions and win grants. The benefit of JetBrains Research is that there are no strict demands to produce papers and publications; researchers can instead focus their efforts on the essence of their work, and not on applying for grants.

Research Groups

- BioLabs

- Bioinformatics Group

- Neurodevelopment and Neurophysiology Lab

- Machine Learning Applications and Deep Learning Lab

- Agent Systems and Reinforcement Learning Lab

- Paper-Analyzer Group

- Cryptographic Lab

- HoTT and Dependent Types Group

- Nuclear Physics Methods Laboratory

- Learning Research Lab

- Mobile Robot Algorithms Laboratory

- Optimization Problems in Software Engineering

- Parameterized Algorithms Group

- Concurrent Computing Lab

- Cyber-Physical Systems Lab

- Machine Learning Methods in Software Engineering

- Programming Languages and Tools Lab

- Verification or Program Analysis Lab aka VorPAL

- Intelligent Collaboration Tools Lab

BioLabs

There is still so much we don’t know about our own inner workings: the factors that lead to genetic mutations, what indicators we need to look for that will predict future health problems, full genome sequencing, and many others. Biology as a science has come a long way, but there is still a long way to go.

The goal of BioLabs is to uncover the mechanisms underlying epigenetic regulation in humans and animals, and to identify the role these mechanisms play in cell differentiation and aging. The largest project BioLabs is involved in is the Aging Project, in collaboration with Washington University in St. Louis, MO. The BioLabs group’s other research projects cover topics such as novel data analysis algorithms, effective Next Generation Sequencing data processing tools, scalable computational pipelines, visualization approaches, and meta-analysis of existing epigenomic databases. BioLabs is also responsible for PubTrends, a new scientific publications analysis service that provides faster analysis of trends and discovery of breakthrough papers. This is essential as the number of papers published each year is growing steadily, making it infeasible for a single person to be aware of all the publications in their field of interest.

Bioinformatics Group

The field of biology is almost unfathomably large, with many different areas still waiting to be discovered and researched. The more we can understand about biology, the better prepared we are for the future and what it may hold.

The Bioinformatics Group is dedicated to the development of efficient computational methods for important problems in biology and medicine. The group is based at the Computer Technologies Department of ITMO University. The group actively collaborates with Maxim Artyomov’s laboratory at Washington University in St. Louis. Their projects cover a diverse range of exciting topics from analyzing metagenomic sequencing data to gene expression analysis and metabolomics. The group applies their extensive expertise in algorithms and computer science to biology-related tasks by reducing the biological problems to known Computer Science problems, and by building data visualization and analysis tools for biologists.

Neurodevelopment and Neurophysiology Lab

Neurodevelopment and neurophysiology have come a long way with extensive research being carried out in this area, but there are many aspects to the science that are still unknown. This field of science holds great potential for our understanding of the mind.

The Neurodevelopment and Neurophysiology Lab is working towards the goal of developing a computational framework for building dynamic spatial models of neural tissue organization and basic stimulus dynamics. The Biological Cellular Neural Network Modeling (BCNNM) project uses sequences of biochemical reactions to run complex neural network models on the formation of initial stem cells. The framework can be used for thorough in silico replication of in vitro experiments to obtain sets of measurements from key components, as well as for preliminary computational testing of novel hypotheses.

Machine Learning Applications and Deep Learning Lab

and

Agent Systems and Reinforcement Learning Lab

The potential for applying machine learning to future endeavors is immense. Machine learning can be used to make systems that are able to forecast and predict events and accurately recognize patterns in ways that would otherwise not be possible. The potential for this to be applied to real-life problems is almost infinite.

Both these labs aim to advance research in the areas of machine learning, data analysis, deep learning, and reinforcement learning, and to apply current state-of-the-art machine learning techniques to various real-world problems. This year, the labs started applying deep learning methods to drug development in a joint effort with the BIOCAD research center. Together with Uppsala University, they also began studying the influence of environmental factors on gene expression. The labs actively work with students from leading universities and are involved in developing courses to improve the level of education in machine learning and data analysis.

Paper-Analyzer Group

When you work at the forefront of science, it is important to keep up with new discoveries and with the latest scientific theories and hypotheses. Automated analysis of research papers makes this task easier and saves time and resources.

The Paper-Analyzer Group aims to facilitate knowledge extraction from scientific (biomedical) papers via Deep Learning models for Natural Language Processing. The core of Paper-Analyzer is a Language Model built with transformer-like architectures and fine-tuned to work with scientific papers. The objective of the Language Model is to predict the next word, given the previous context. Models built on top of the Language Model can be trained to solve several downstream tasks, such as Named Entity Recognition, Relation Extraction, and Question Answering. The group also experiments with generative models for paper summarization and sentence paraphrasing. All of this works toward their ultimate goal, which is automatic knowledge extraction.
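
To make the "predict the next word, given the previous context" objective concrete, here is a minimal sketch in Python. It is not the group's model: it swaps the transformer for a simple bigram counter that uses only the single previous word as context, and the toy corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count, for every word, which words follow it in the training text."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev_word, next_word in zip(words, words[1:]):
            following[prev_word][next_word] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; a real model is trained on a large body of papers.
corpus = [
    "the gene regulates the protein",
    "the gene regulates cell growth",
    "the protein binds the receptor",
]
model = train_bigram_model(corpus)

print(predict_next(model, "gene"))  # prints "regulates"
```

A transformer-based language model pursues the same objective, but conditions on a much longer context and learns its statistics with a neural network instead of a lookup table.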

Cryptographic Lab

Security is an important subject in the modern world, and as more and more information becomes digitized, there is an ever-increasing need to ensure that it is securely stored and maintained.

The Cryptographic Lab focuses its research on the modern problems of cryptography and information security. It works in partnership with COSIC, the Computer Security and Industrial Cryptography group in Leuven (Belgium); the Selmer Center at the University of Bergen (Norway); and the University of Paris and INRIA (France). Their research areas include cryptographic Boolean functions, symmetric ciphers, lightweight cryptography, blockchain technologies, quantum cryptography, and information security. As well as publishing monographs and articles in top cryptographic journals, they teach crypto courses at Novosibirsk State University and organize the renowned NSUCRYPTO International Olympiad in Cryptography.

HoTT and Dependent Types Group

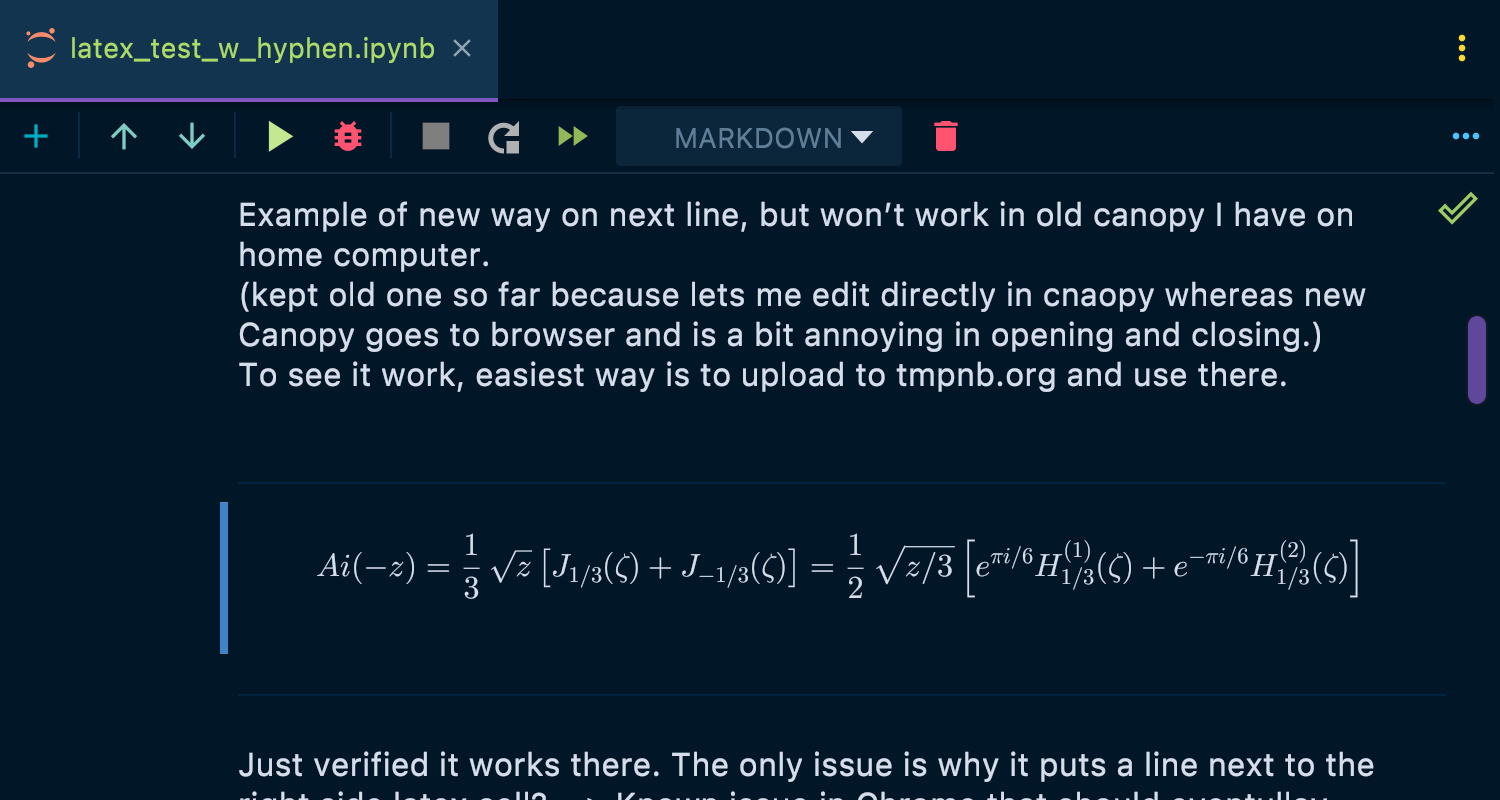

Homotopy type theory is a relatively new branch of mathematics combining several different fields. Mathematics needs a solid basis of proof to be established – as Einstein once said, “No amount of experimentation can ever prove me right; a single experiment can prove me wrong.” Given the immense complexity of mathematics, this is a huge and important undertaking.

The main focus of the HoTT and Dependent Types group is to build Arend, a dependently typed language and theorem prover based on Homotopy Type Theory (HoTT). HoTT is a more advanced theoretical framework than those on which systems like Agda and Coq are based. The ultimate goal is to create an online collaborative proof assistant based on a modern type theory to enable the formalization of certain branches of mathematics.

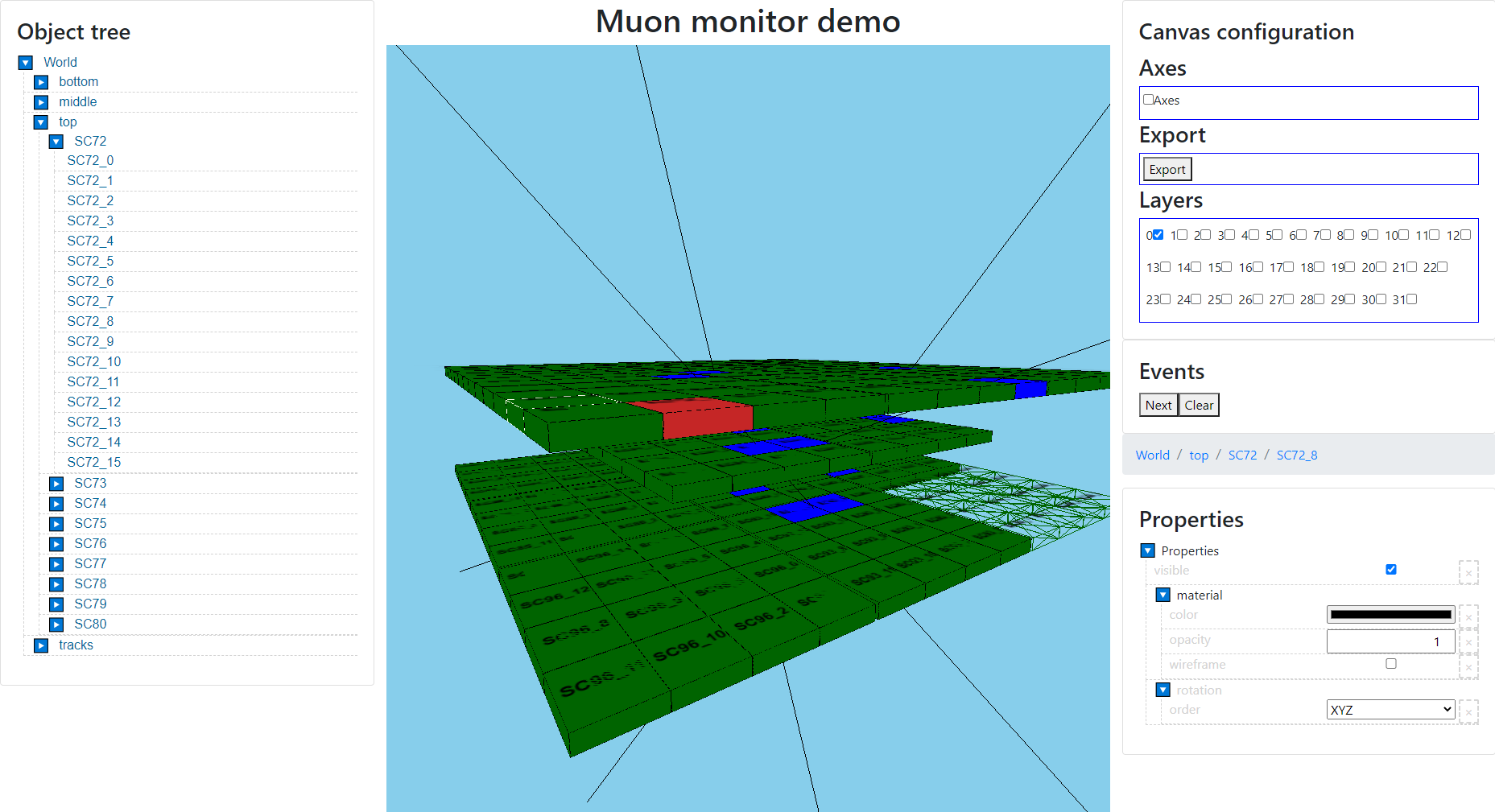

Nuclear Physics Methods Laboratory

Nowadays, many important tasks in particle physics, including numerical simulation and analysis of experimental data, rely heavily on software to ensure that experiments are reproducible and the results are reliable.

The Nuclear Physics Methods lab is based at MIPT in Moscow, and its main interest is methodology and software for particle physics. The current focus of the lab’s software group is the design of a new generation of data acquisition (slow control, signal processing) and analysis tools. Their research focuses on three areas: non-accelerator particle physics (GERDA, Troitsk nu-mass, KATRIN, and IAXO experiments); numerical simulation in particle physics (both accelerator and non-accelerator experiments, atmospheric discharge phenomena, and x-ray physics); and software development for experimental physics (data acquisition and analysis systems, infrastructure projects, and scientific libraries for the Kotlin language). The lab is also strongly focused on education; they aim to provide younger students with real-life (and real science) experience in physics and software development.

Learning Research Lab

The future of technology is in the hands of the next generation of engineers, so it is in our collective interest to give them the best possible start in their careers. We believe that excellence begins with education, and so we feel that furthering the science of learning is an important pursuit.

The Learning Research lab pursues the goal of developing interventions to promote STEM (science, technology, engineering, and mathematics) subjects among high school students and to increase their chances of succeeding and furthering their professional careers in STEM-related fields. The lab is working on a longitudinal research project to discover the main predictors of students’ success in programming and STEM subjects. They are looking at the interplay of four possible factors: cognitive skills, non-cognitive characteristics (educational and professional attitudes, social environment), gender, and learning methodologies. They want to answer the following questions:

- Who chooses STEM and programming majors?

- What characteristics (cognitive abilities, family background, etc.) lead to higher achievements and lower drop-out rates?

- Are there attitudinal characteristics (like motivation and engagement) that can counterbalance background effects?

- What learning methodologies lead to success, and what increases the chances of failure?

Mobile Robot Algorithms Laboratory

Self-driving cars are already a reality and autonomous vehicle prototypes are currently changing the landscape of the roads and the future of driving. The technology is still in its early days, and there is much more that can be improved in autonomous systems.

The Mobile Robot Algorithms Laboratory’s research is focused on developing efficient algorithms for mobile robots. The lab hosts the only Duckietown in Russia; Duckietown is a platform and environment for the development of mobile robot algorithms. The main problem of interest for the lab is Simultaneous Localization and Mapping (SLAM). SLAM consists of constructing and maintaining a map of an unknown environment while keeping track of the agent’s location in that environment by analyzing data from various sensors. The complexity of the SLAM problem is rooted in the noise that is inherent to physical sensors, and in the need to keep track of changes in a dynamic environment. On top of that, many SLAM algorithms are designed to run on low-cost hardware, which imposes strict performance limitations. In 2019, the lab took part in 3 AI Driving Olympics, a competition of autonomous driving robots and a prestigious venue for the improvement of self-driving vehicle research. They came first in all 3 competitions. Notably, this was the first time the competition was won by a deep-learning algorithm.

The lab’s researchers teach a variety of STEM courses in universities, offer mobile software development courses to school students, and host visiting students from MIT via the MISTI program.

Optimization Problems in Software Engineering

JetBrains tools are developed to help our users be more productive and write better code. Behind the scenes, there is a lot of research and testing to make sure that we are creating the best products we can. It may come as no surprise that we have research labs dedicated to software engineering fields.

The Optimization Problems in Software Engineering group’s research mainly focuses on solving hard optimization problems arising in the areas of reliable systems engineering, grammatical inference, and software verification. In particular, the group works on the synthesis of finite automata models from specifications such as execution traces and test cases.

The primary research projects include: finite-state machine inference with metaheuristic algorithms; synthesis, testing, and verification of industrial automation software; metaheuristic algorithms parameter tuning; and constraint programming for graph and automata problems.
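
As a rough illustration of what building an automaton model from execution traces can look like, the Python sketch below constructs a prefix tree acceptor: a tree-shaped finite automaton that accepts exactly the traces it was given. This is a textbook starting point for automata inference, not the group's method, which relies on metaheuristic algorithms and constraint programming; the trace contents and function names are hypothetical.

```python
def build_prefix_tree_acceptor(traces):
    """Build a tree-shaped automaton accepting exactly the given traces.

    States are integers, with 0 as the initial state.
    transitions[state][event] gives the next state.
    """
    transitions = [{}]
    accepting = set()
    for trace in traces:
        state = 0
        for event in trace:
            if event not in transitions[state]:
                # Unseen continuation: allocate a fresh state for it.
                transitions.append({})
                transitions[state][event] = len(transitions) - 1
            state = transitions[state][event]
        accepting.add(state)
    return transitions, accepting


def accepts(transitions, accepting, trace):
    """Run the automaton on a trace and report whether it ends in an accepting state."""
    state = 0
    for event in trace:
        if event not in transitions[state]:
            return False
        state = transitions[state][event]
    return state in accepting


# Hypothetical execution traces of a file-handling component.
traces = [
    ["open", "read", "close"],
    ["open", "write", "close"],
    ["open", "close"],
]
transitions, accepting = build_prefix_tree_acceptor(traces)

print(accepts(transitions, accepting, ["open", "read", "close"]))  # prints "True"
print(accepts(transitions, accepting, ["open", "read"]))           # prints "False"
```

Such a tree only memorizes its input. The hard optimization problem lies in generalizing it into a small automaton that remains consistent with all the traces, for example by merging states.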

Parameterized Algorithms Group

There is always something new to learn in computer science, and solving challenging complex issues using the most up-to-date techniques keeps us advancing.

The Parameterized Algorithms group is dedicated to researching and solving computationally challenging problems using modern techniques for designing exact algorithms. This often involves establishing connections between different problems and investigating how the complexity of a particular problem changes on specific classes of problem instances, such as instances with bounded parameter values. The group runs several research projects that focus on problems such as maximum satisfiability, graph coloring, and graph clustering. While these problems are computationally hard in general, for instances whose parameters are bounded there exist algorithms with reasonable complexity, which makes their computation feasible even for larger inputs.
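
The idea that a bounded parameter can tame a hard problem is easiest to see on a small example. The Python sketch below uses vertex cover, a standard textbook problem that is not among the group's projects listed above, and solves it with a bounded search tree. The function name and the sample graph are assumptions made for illustration.

```python
def vertex_cover(edges, k):
    """Return a vertex cover with at most k vertices, or None if none exists.

    Bounded search tree: for any remaining edge (u, v), at least one endpoint
    must be in the cover, so we branch on both choices. The recursion depth is
    at most k, so there are at most 2**k branches no matter how large the
    graph is.
    """
    if not edges:
        return set()   # nothing left to cover
    if k == 0:
        return None    # edges remain, but the budget is exhausted
    u, v = edges[0]
    for chosen in (u, v):
        # Taking `chosen` covers every edge that touches it.
        remaining = [edge for edge in edges if chosen not in edge]
        cover = vertex_cover(remaining, k - 1)
        if cover is not None:
            return cover | {chosen}
    return None


# Hypothetical path graph a - b - c - d.
path = [("a", "b"), ("b", "c"), ("c", "d")]

print(vertex_cover(path, 1))              # prints "None"
print(vertex_cover(path, 2) is not None)  # prints "True"
```

The exponential part of the running time depends only on the parameter k, while the dependence on the input size stays polynomial. This is why such algorithms remain practical on large inputs as long as the parameter is small.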

Concurrent Computing Lab

Concurrent programming has gained popularity over the past few decades. Every language and platform provides the corresponding primitives, which become harder and harder to use in the most efficient way as system complexity increases, such as with multiple NUMA nodes, as well as with relaxations of memory models.

This raises several important practical questions. How can we build efficient concurrent algorithms nowadays? What is the best trade-off between progress guarantees, efficiency, and fairness? How do we check all these algorithms for correctness? How do we benchmark them? While some of the questions are partially answered in academia, a lot of the practical problems remain unresolved.

The primary focus of the Concurrent Computing Lab is to answer two of these questions (‘How can we build efficient concurrent algorithms nowadays?’ and ‘What is the best trade-off between progress guarantees, efficiency, and fairness?’) by providing practically reasonable and theoretically valuable solutions, as well as high-quality tools that can help other researchers and developers in the field of concurrency. The topics the lab is interested in include: concurrent algorithms and data structures; non-volatile memory (NVM); testing and verification; performance analysis, debugging, and optimization; parallel programming languages and models; and memory reclamation.

Cyber-Physical Systems Lab

Many of the challenges in developing embedded and cyber-physical systems come from the different design practices used in mechanical and software engineering. There are huge opportunities for research in this area, as embedded systems are important across so many industries.

The Cyber-Physical Systems Lab’s research interests include process-oriented programming, software psychology, and domain-specific languages for control software (cyber-physical systems, PLCs, embedded systems, IIoT, distributed control systems, etc.), as well as safety-critical systems, requirement engineering, formal semantics, and dynamic and static verification (model checking, deductive verification, ontological design).

Machine Learning Methods in Software Engineering

Machine learning has come a long way and has various incredibly useful applications. It is also possible to apply machine learning to the realm of software engineering to enhance the capabilities of software engineering tools.

The Machine Learning Methods in Software Engineering group is focused on devising and testing techniques to improve software engineering tools and processes by applying data analysis techniques, including machine learning, to data found in software repositories. They collaborate with several product teams at JetBrains on integrating state-of-the-art, data-driven techniques into the company’s products. The group is currently working on over a dozen research projects on a variety of topics ranging from supporting data mining libraries to generating code from natural language descriptions. Recent results of the group include a new approach to the recommendation of move method refactorings, a study of license violations in borrowed code on GitHub, a state-of-the-art approach to source code authorship attribution, and a method to build vector representations of coding style without explicit features.

Programming Languages and Tools Lab

Programming language theory is another vested interest of JetBrains, both from the point of view of the tools we produce and because we have our own language, Kotlin.

The Programming Languages and Tools lab was established to carry out scientific research in the area of programming language theory. It is a joint initiative between JetBrains and the Department of Software Engineering at the Faculty of Mathematics and Mechanics of Saint Petersburg State University. The lab covers a broad range of research topics, including: the theory of formal languages and its applications to parsing, static code analysis, graph database querying, bioinformatics, and other fields; formal programming language semantics and, in particular, the semantics of weakly consistent memory models; formal verification techniques based on theorem provers and SMT solvers; program optimization methods based on partial evaluation and supercompilation; and various programming paradigms, including functional, relational, and certified programming. The lab is also very active outside its research, hosting annual winter and summer schools, weekly global seminars, and post-graduate internships, among many other initiatives.

Verification or Program Analysis Lab aka VorPAL

Improving day-to-day productivity is high on the list of things that the tools we create at JetBrains should help with. These tools could not have gotten to where they are today without the dedicated efforts and research put into them by our research labs.

At the Verification or Program Analysis lab, students, postgraduates, and researchers develop software technologies based on formal methods, such as verification, static analysis, and program transformation techniques. These methods are used to improve day-to-day developer productivity as standalone tools, programming language extensions, or IDE plugins.

A significant part of the current research effort goes into exploring various ways of extending Kotlin. We believe that Kotlin still has a lot of room for further improvements and extensions, such as macros, liquid types, pattern matching, and variadic generics. Other research areas this lab is involved in include (but are not limited to) applying concolic testing to Kotlin and various compiler fuzzing techniques.

Intelligent Collaboration Tools Lab

Coding is not the only activity software engineers are involved in these days. Software engineers now need to spend a considerable amount of time exchanging information and collaborating with colleagues. Working in software engineering increasingly relies on specialized tools like communication engines, issue trackers, and code review platforms to make collaboration between teams possible.

The goals of the new Intelligent Collaboration Tools Lab are to gain a deeper understanding of collaborative processes in software engineering and other creative industries, and to devise novel approaches to tool support for collaborative work.

If you are interested in joining any of the groups or setting up a collaborative project, please contact the lab leads directly. For general questions, please email info@research.jetbrains.org.

Many thanks to Olga Andreevskikh for her valuable help with preparing this post.