.NET Tools

Essential productivity kit for .NET and game developers

Creating Custom AI Prompts

AI has swept through the software development industry like wildfire, and developers want to learn how best to use it in their day-to-day tasks. In this post, we’ll look at how to write custom prompts for JetBrains AI Assistant in ReSharper and Rider so you can make the most of AI.

Prompts: Making AI work well

Prompts are how you formulate queries and commands for an AI so that it generates the results you expect. AI tools use natural language processing (NLP) to interpret prompts, so prompting feels conversational, much like talking with another person. The tool accepts your query and sends it to an LLM (large language model), which returns a response.

As developers, we know computers do exactly what we tell them. When code doesn’t match the user requirements, we call it a bug, and the fault lies with the programmer rather than the computer, which did exactly as instructed. Prompting an AI is similar: you must be specific. Your queries and commands must state all pertinent information and instructions precisely for the AI to complete the task you want. The most important part of writing great prompts is making sure that the requirements, instructions, or queries are absolutely clear.

One aspect of clarity is context, because AI tools rely heavily on context to do their work. Context starts with your current project: all the files and assets it contains. It also includes the chat history and the AI’s general knowledge of the problem space. From there, you can include other contextual information in the prompts themselves. For example, you may wish to repeat a previous command or use information from an earlier part of the conversation. Building prompts in a conversational tone, where each one builds on the last, is called “interactive prompting”.

Let’s say you want to compete in a sport, perhaps tennis, but you’ve never even held a tennis racket. You could ask “How do I enter a national tennis tournament?” because that’s your end goal. But the AI might assume what a human might: that you already play tennis and have competed in local tournaments. If the AI makes this assumption, the answer might simply cover how to register for the tournament, not how to learn the skills to play at a competitive level. Supplying the surrounding context helps you get better results. Rephrasing the query as “I want to compete in tennis. I have never played tennis before. What steps should I take to learn how to play tennis well enough to compete in a national tournament?” should yield better results because it provides more relevant background information. AI is a lot like humans in this way, because it is trained on data drawn from human interactions.

A good way to build context is to challenge the AI. Ask it “Why do you think that?” or “What evidence supports your answer?” and see how it responds. Always be suspicious of the answers, because AI can hallucinate and simply make things up. Some LLMs return very wordy responses, so asking the LLM to keep its responses short can make them clearer. You can also correct and clarify prompts based on how the AI answered previously. If it’s misinterpreting you, you may be able to just tell it what it missed and continue.

Create a basic prompt

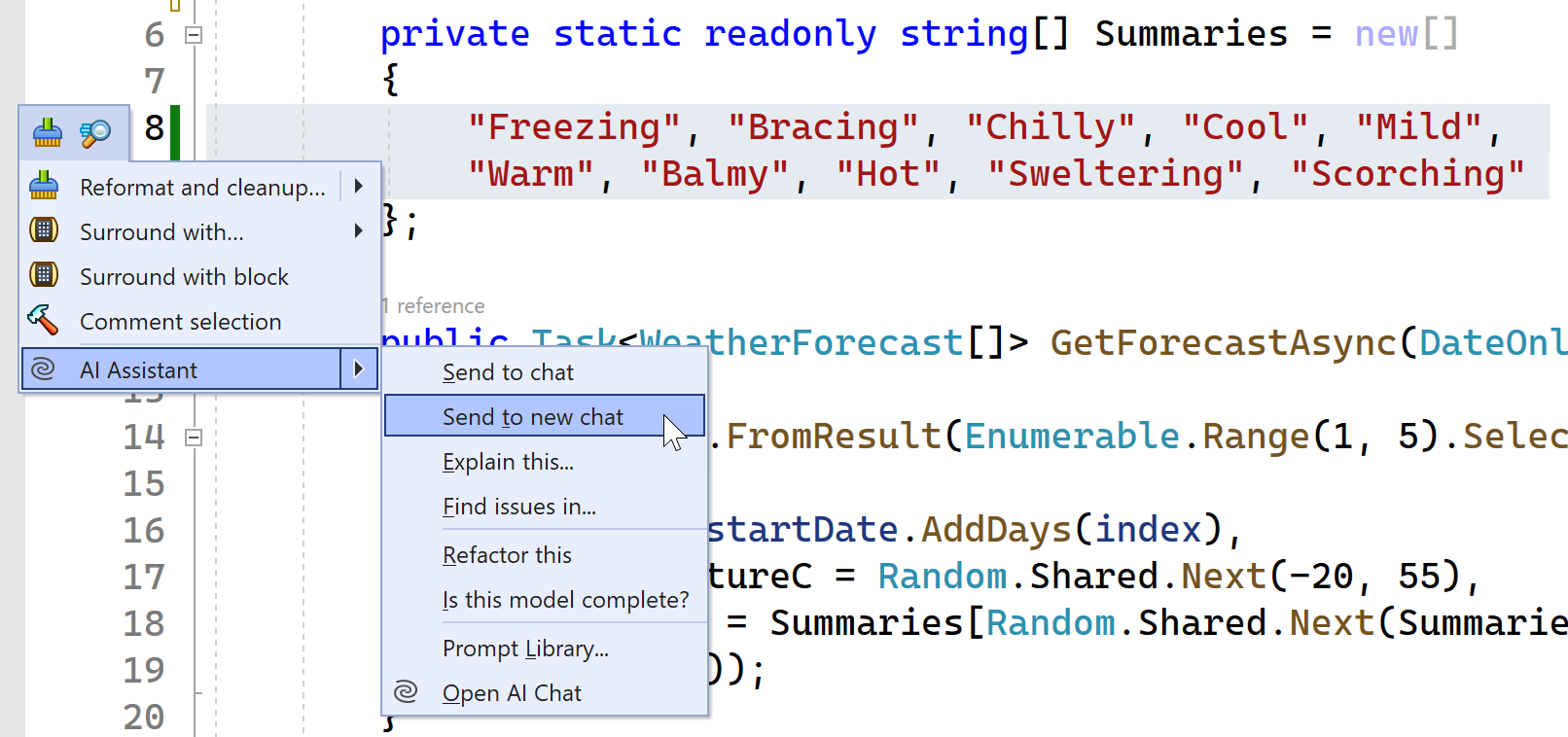

When you’re ready to prompt, there are a few approaches. Open the ReSharper AI Assistant and start chatting. Alternatively, you can invoke the AI Assistant from a highlighted block of code, as shown here:

Choosing Send to new chat creates a context for the chat and sends the highlighted code to the AI Assistant chat. Add your details to the prompt and send it. When you get a reply, you can use interactive prompting to achieve your task.

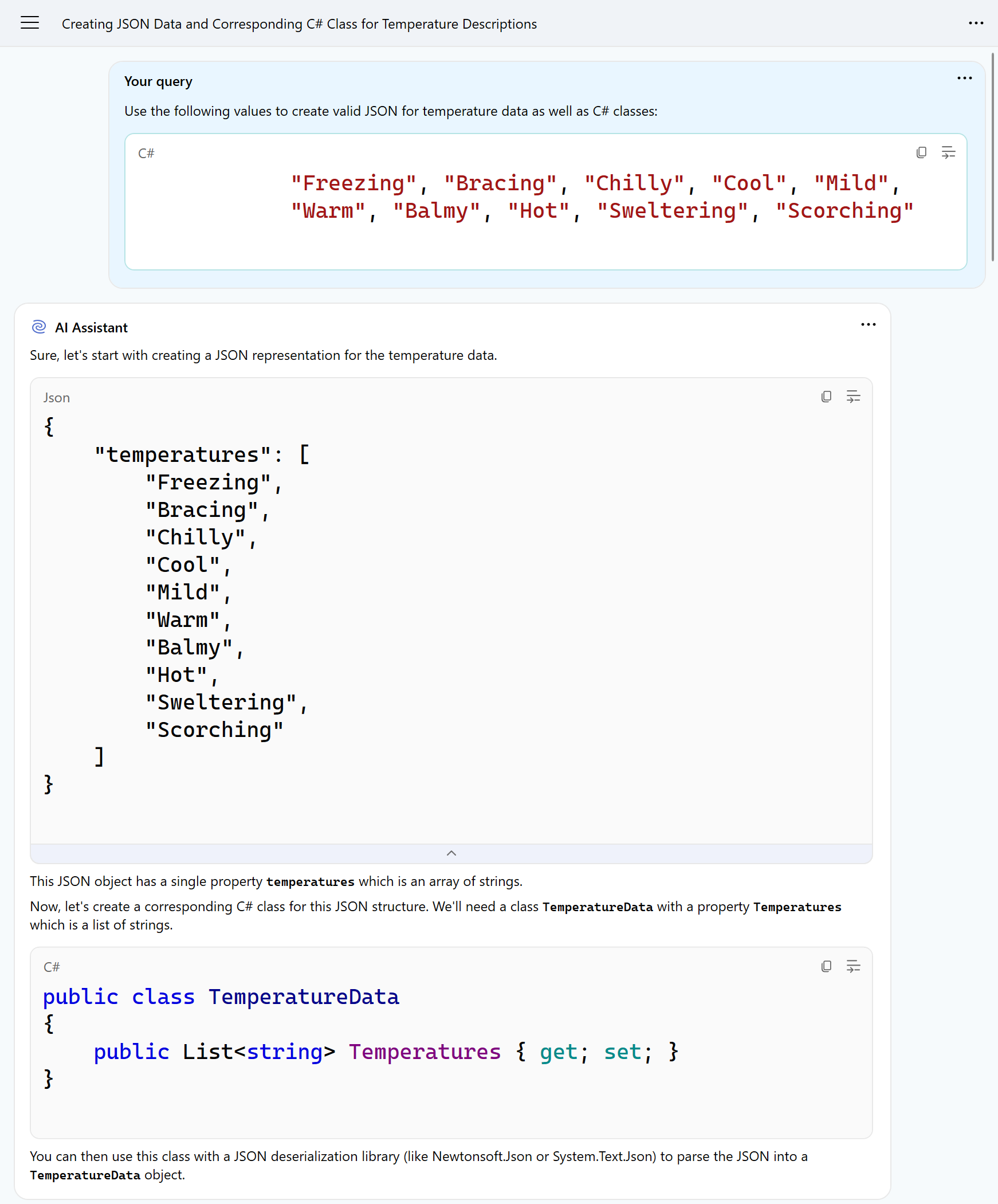

In the following example, the AI Assistant was asked to create both valid JSON and C# classes from the string of values. As you can see, the response is very basic. The JSON is equivalent to a simple array, and the class contains a List object to hold those values.
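As a rough illustration of what such a basic reply amounts to, here is a minimal C# sketch. The input string, the `TemperatureData` class name, and the `Values` property are hypothetical stand-ins, since the actual values and generated code appear only in the screenshot.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Text.Json;

// Hypothetical input: a comma-separated string of readings.
const string values = "72.5, 68.1, 75.3";

// Parse each entry into the model's list.
var model = new TemperatureData();
foreach (var part in values.Split(',', StringSplitOptions.TrimEntries))
{
    model.Values.Add(double.Parse(part, CultureInfo.InvariantCulture));
}

// Serializing the list on its own produces a plain JSON array.
string json = JsonSerializer.Serialize(model.Values);
Console.WriteLine(json);

// Hypothetical class: it simply wraps a List that holds the values.
public class TemperatureData
{
    public List<double> Values { get; } = new();
}
```

Nothing here describes identity, units, or relationships, which is why a reply like this is rarely enough for real data models.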

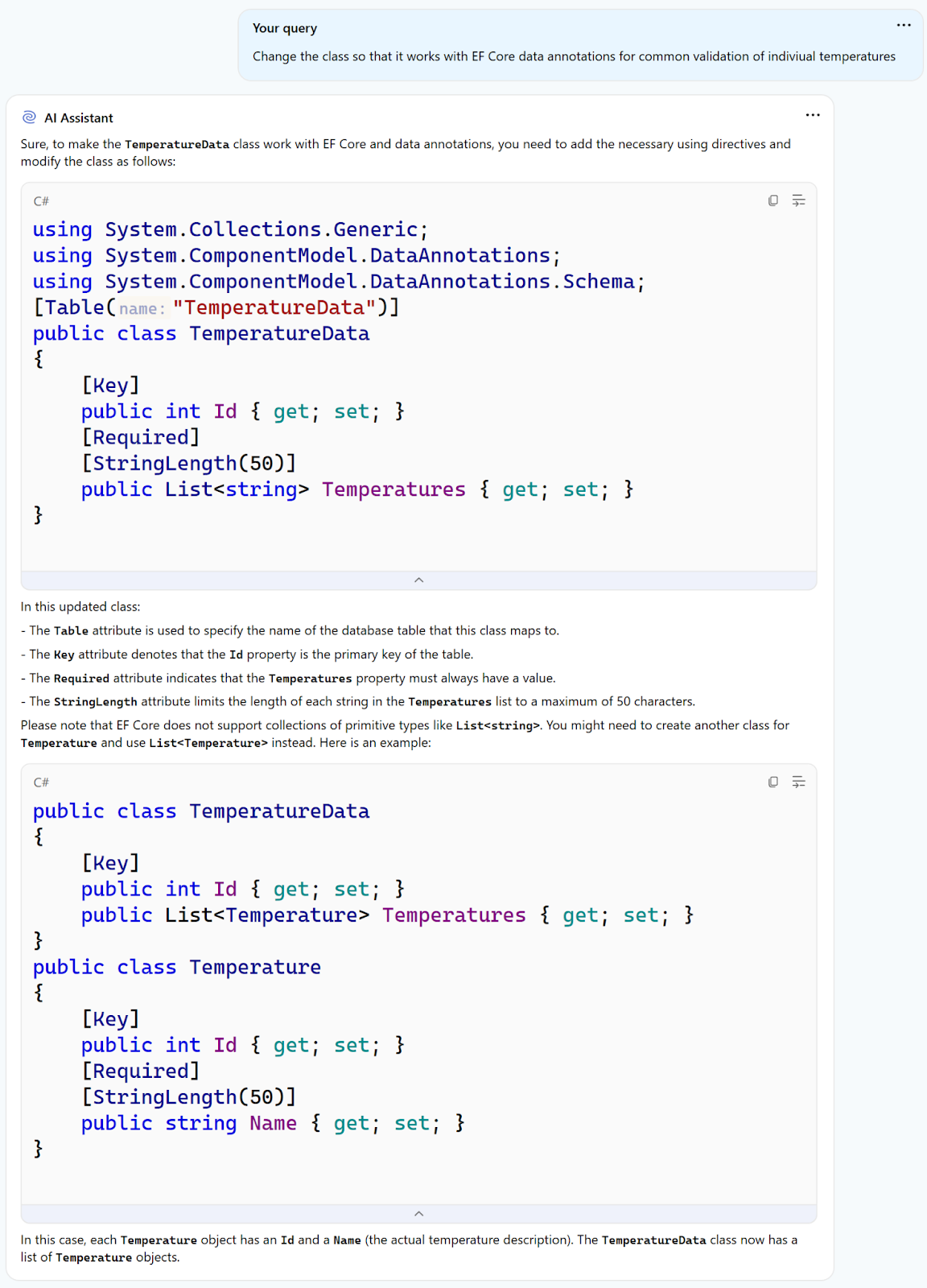

Most likely, we want our models to resemble the actual data structures we work with more closely. With interactive prompting, we can add instructions, for example to make our class more amenable to Entity Framework, as shown below:

Note that, as the AI chat suggests, earlier versions of EF Core do not support collections of primitive types, but EF Core 8 does. This is a great example of where your expertise intersects with prompting. If you rely on results without proper review, someone could apply this code to an older codebase where it wouldn’t work. Always review the replies from any AI tool carefully and make a professional judgment call rather than simply accepting what’s returned.
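To make the version dependency concrete, the following is a sketch of an entity that relies on primitive collection support. It assumes a project that references the `Microsoft.EntityFrameworkCore` package, version 8 or later. The `TemperatureData`, `Temperatures`, `WeatherContext`, and `Readings` names are hypothetical and are not taken from the AI Assistant reply.

```csharp
using System.Collections.Generic;
using Microsoft.EntityFrameworkCore;

// Hypothetical entity. The List<double> property is a collection of a
// primitive type, which EF Core 8 can map without extra configuration.
public class TemperatureData
{
    public int Id { get; set; }  // picked up as the primary key by convention

    public List<double> Temperatures { get; set; } = new();
}

// Hypothetical context that exposes the entity as a set.
public class WeatherContext : DbContext
{
    public WeatherContext(DbContextOptions<WeatherContext> options)
        : base(options)
    {
    }

    public DbSet<TemperatureData> Readings => Set<TemperatureData>();
}
```

On an older EF Core version, the same `Temperatures` property would typically need a value converter or a separate child entity, which is exactly the kind of detail a reviewer has to catch.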

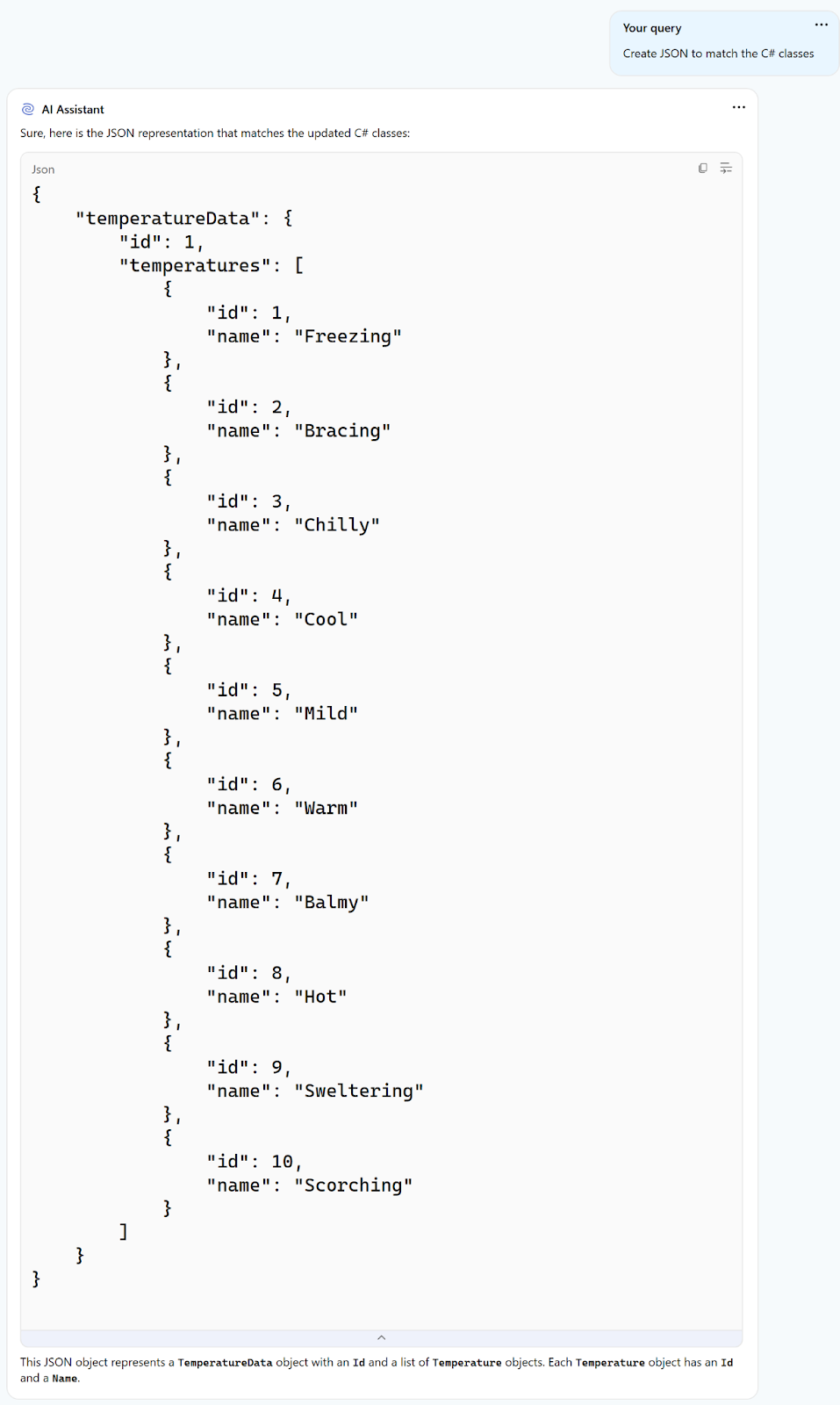

You can continue prompting and ask it to change the JSON so that it matches the model more closely. It replies with a list of temperatures in an array of temperature data.
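One plausible reading of that reshaped JSON is an array of objects, each carrying its own list of temperatures. The sketch below assumes that shape and deserializes it with `System.Text.Json`. The property names and sample values are hypothetical, and the raw string literal requires C# 11 or later.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical JSON shaped like the model: an array of temperature data
// objects, each with a list of temperatures.
const string json = """
[
  { "id": 1, "temperatures": [72.5, 68.1, 75.3] },
  { "id": 2, "temperatures": [61.4, 64.2] }
]
""";

// Match the lowercase JSON names to the PascalCase C# properties.
var options = new JsonSerializerOptions { PropertyNameCaseInsensitive = true };
var items = JsonSerializer.Deserialize<List<TemperatureData>>(json, options)
            ?? new List<TemperatureData>();

foreach (var item in items)
{
    Console.WriteLine($"{item.Id}: {item.Temperatures.Count} readings");
}

// Hypothetical model class matching the JSON above.
public class TemperatureData
{
    public int Id { get; set; }

    public List<double> Temperatures { get; set; } = new();
}
```

Round-tripping the reply through a deserializer like this is a quick way to check that the JSON and the class the AI produced actually agree with each other.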

From here you can continue to modify the prompts based on the results until you get exactly what you want.

Important note: The AI Assistant tool window does not make any changes in the editor. When you use actions that produce code, that code is shown in the AI Assistant window. You must create any files needed and copy the output where appropriate.

Save custom prompts

Now that you’re creating great prompts, you might want to save some of them for repeated use. They’re also great for building context for advanced queries. Both ReSharper and Rider have a prompt library for storing custom prompts. Custom prompts saved in your prompt library are a great way to keep “AI macros” for common tasks, such as:

- Onboarding instructions

- Code explanations

- Moving and transforming data or code

- Writing or clarifying commit messages

- So much more…

Several actions in the IDE have an option to save a prompt to the library. For example, using the AI Assistant through Alt+Enter reveals some prompts that are immediately available, such as:

- Explain this…

- Find issues in…

- Refactor this…

- Is this model complete?

You can customize these prompts and add the enhanced versions to the prompt library, or create your own from prompts you’ve already made. To create a prompt, use the $SELECTION token as a placeholder for the block of code that the AI Assistant should work with. It stands in for that part of the query that is sent to the LLM.
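As an illustration, a saved prompt might read something like the following. The wording is entirely hypothetical; only the $SELECTION token comes from the description above.

```text
Explain what the following C# code does in plain language.
List any bugs or unhandled edge cases you notice.
Keep the answer under ten sentences.

$SELECTION
```

Stating the task, the expected output, and a length limit up front applies the same clarity principles discussed earlier, so the prompt behaves consistently each time it is reused.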

While making your custom prompt, you can use AI to further enhance it. Think of it as “Promptception”. Once your team has a nice set of useful prompts, you can automate some tasks that might otherwise be done manually, such as creating classes based on JSON or a database schema, generating tests, or any other work you’d like to offload.

Conclusion

AI is here, and developers need to integrate it into their daily processes, both as individuals and as teams. JetBrains AI Assistant is a fast way to use AI effectively and accurately in your code. Remember, it’s all about two things: the prompts, and accurately judging whether the output you get is credible. If you craft prompts that humans can’t read or understand, AI probably can’t understand them either, and you probably won’t get good results. Similarly, if AI replies with bogus information, you must be able to detect it. Those are the real skills when it comes to AI, so develop them. With the help of JetBrains AI Assistant, the possibilities are endless.